
In the ever-evolving landscape of technology, the rise of large language models (LLMs) has been nothing short of a revolution. Tools like ChatGPT and Google Bard are at the forefront, showcasing the art of the possible in digital interaction and application development.

The success of models such as ChatGPT has spurred a surge in interest from companies eager to harness the capabilities of these advanced language models.

Yet, the true power of LLMs doesn’t just lie in their standalone abilities.

Their potential is amplified when they are integrated with additional computational resources and knowledge bases, creating applications that are not only smart and linguistically skilled but also richly informed by data and processing power.

And this integration is exactly what LangChain tries to address.

LangChain is an innovative framework crafted to unleash the full capabilities of LLMs, enabling a smooth symbiosis with other systems and resources. It's a tool that gives data professionals the keys to construct applications that are as intelligent as they are contextually aware, leveraging the vast sea of information and computational variety available today.

It's not just a tool; it's a transformational force that is reshaping the tech landscape.

This prompts the following question:

How will LangChain redefine the boundaries of what LLMs can achieve?

Stay with me and let’s try to discover it all together.

LangChain is an open-source framework built around LLMs. It provides developers with an arsenal of tools, components, and interfaces that streamline the architecture of LLM-driven applications.

However, it is not just another tool.

Working with LLMs can sometimes feel like trying to fit a square peg into a round hole.

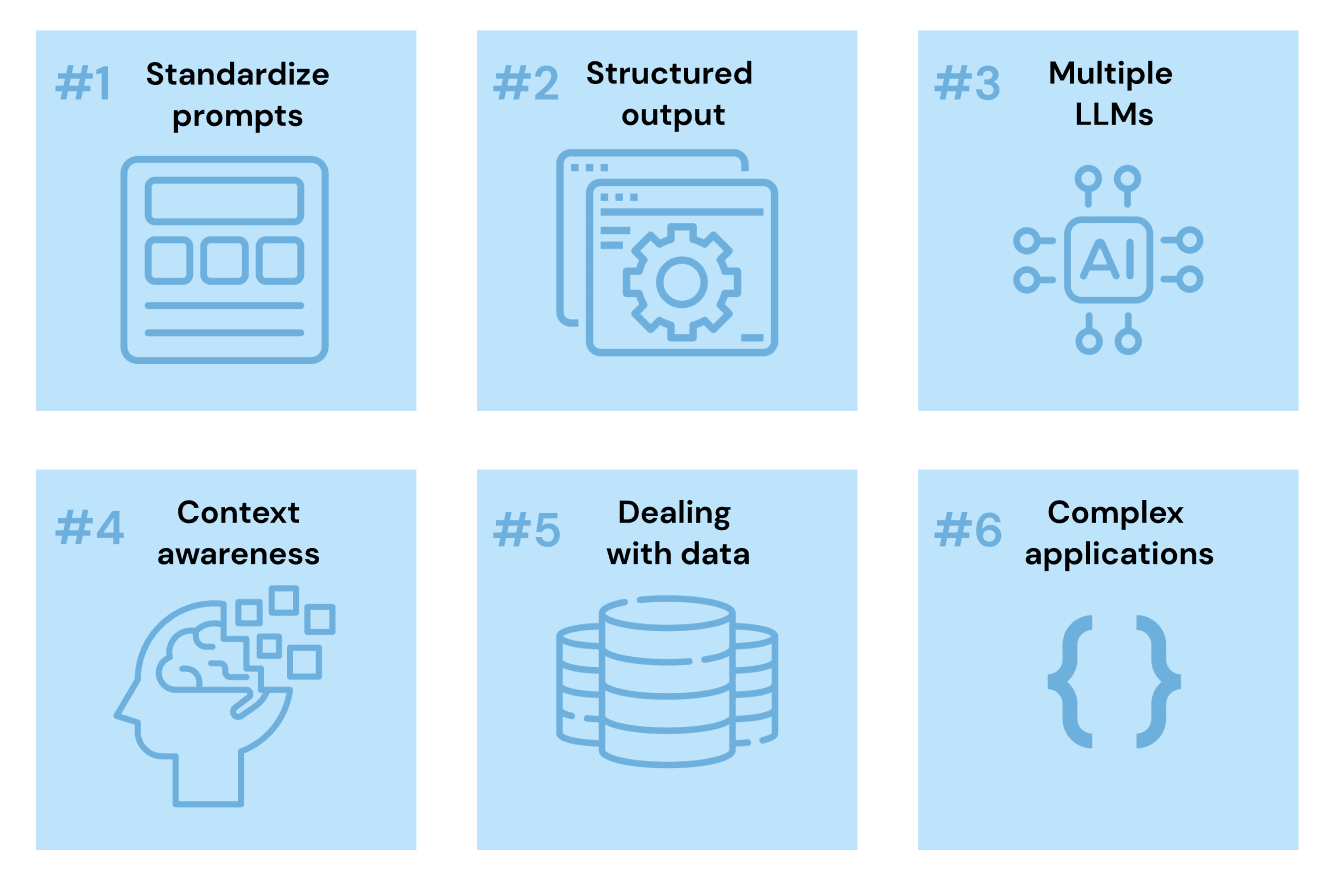

There are some common problems that I bet most of you have already experienced yourselves:

- How to standardize prompt structures.

- How to make sure the LLM's output can be used by other modules or libraries.

- How to easily switch from one LLM to another.

- How to keep a memory of previous interactions when needed.

- How to deal with data.

- How to fit LLMs into larger systems and workflows.

All these problems bring us to the following question:

How can we develop a complex application while being sure that the LLM will behave as expected?

The prompts are riddled with repetitive structures and text, the responses are as unstructured as a toddler’s playroom, and the memory of these models? Let’s just say it’s not exactly elephantine.

So… how can we work with them?

Trying to develop complex applications with AI and LLMs can be a complete headache.

And this is where LangChain steps in as the problem-solver.

At its core, LangChain is made up of several ingenious components that make it easy to integrate LLMs into almost any application.

LangChain is generating enthusiasm for its ability to amplify the capabilities of potent large language models by endowing them with memory and context. This addition enables the simulation of “reasoning” processes, allowing for the tackling of more intricate tasks with greater precision.

For developers, part of the appeal of LangChain lies in the new kind of user interface it makes possible. Rather than relying on traditional methods like drag-and-drop menus or forms, users of a LangChain-powered application can describe their needs directly in natural language, and the application responds to those requests.

It is a framework designed to supercharge software developers and data engineers with the ability to seamlessly integrate LLMs into their applications and data workflows.

So this brings us to the following question: how exactly does LangChain help?

Current LLMs present 6 main problems, so let's see how LangChain tries to address each of them.


1. Prompts are way too complex now

Let's try to recall how the concept of a prompt has rapidly evolved over the last few months.

It all started with a simple string describing an easy task to perform:

Hey ChatGPT, can you please explain to me how to plot a scatter chart in Python?

However, over time people realized this was way too simple. We were not providing LLMs with enough context to understand their main task.

Today we need to tell any LLM much more than simply the main task to fulfill. We have to describe the AI's high-level behavior and writing style, include instructions to make sure the answer is accurate, and add any other detail that gives our model more context.

So today, rather than using the very first prompt, we would submit something more similar to:

Hey ChatGPT, imagine you are a data scientist. You are good at analyzing data and visualizing it using Python.

Can you please explain to me how to generate a scatter chart using the Seaborn library in Python?

Right?

However, as most of you have already realized, we can ask for a different task while keeping the same high-level behavior of the LLM. This means that most of the prompt can remain the same.

This is why we should be able to write this part just once and then add it to any prompt we need.

LangChain fixes this repeated-text issue by offering prompt templates.

These templates mix the specific details you need for your task (asking exactly for the scatter chart) with the usual text (like describing the high-level behavior of the model).

So our final prompt template would be:

Hey ChatGPT, imagine you are a data scientist. You are good at analyzing data and visualizing it using Python.

Can you please explain to me how to generate a {chart_type} using the {library} library in Python?

With two main input variables:

- chart_type: the type of chart

- library: the Python library
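To make the idea concrete, here is a minimal sketch in plain Python. It is not LangChain's own API (LangChain ships a PromptTemplate class for this job, and its exact interface has changed between versions); it simply uses str.format, and the names SYSTEM_BEHAVIOR, TASK_TEMPLATE, and build_prompt are hypothetical.

```python
# Shared "high-level behavior" text: written once, reused in every prompt.
SYSTEM_BEHAVIOR = (
    "Hey ChatGPT, imagine you are a data scientist. "
    "You are good at analyzing data and visualizing it using Python."
)

# Task-specific part with two placeholders (hypothetical variable names).
TASK_TEMPLATE = (
    "Can you please explain to me how to generate a {chart_type} "
    "using the {library} library in Python?"
)


def build_prompt(chart_type: str, library: str) -> str:
    """Fill in the task template and prepend the shared behavior description."""
    task = TASK_TEMPLATE.format(chart_type=chart_type, library=library)
    return f"{SYSTEM_BEHAVIOR}\n\n{task}"


print(build_prompt("scatter chart", "Seaborn"))
```

Only the two input variables change from one request to the next; the behavior description never has to be retyped.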

2. Responses Are Unstructured by Nature

We humans interpret text easily. This is why, when chatting with any AI-powered chatbot like ChatGPT, we can easily deal with plain text.

However, when using these very same AI algorithms inside apps or programs, the answers should be provided in a fixed format, such as CSV or JSON.

Again, we can try to craft sophisticated prompts that ask for specific structured outputs. But we cannot be 100% sure that this output will be generated in a structure that is useful for us.

This is where LangChain’s Output parsers kick in.

These parsers turn any LLM response into a structured variable that the rest of your program can use directly. Rather than simply hoping ChatGPT answers in valid JSON, you can let LangChain parse the output and build the JSON for you.
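As a rough illustration of what an output parser does, the sketch below turns a chatty plain-text answer into a Python dict and then into JSON. It is plain Python with a hypothetical function name, parse_key_value_response; LangChain's real parsers come as their own classes and typically also provide formatting instructions to include in the prompt.

```python
import json


def parse_key_value_response(text: str, required_keys: list[str]) -> dict[str, str]:
    """Turn 'Key: value' lines into a dict, keeping only the keys we expect."""
    result: dict[str, str] = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        key = key.strip().lower()
        # Skip filler lines (greetings, explanations) that are not expected keys.
        if sep and key in required_keys:
            result[key] = value.strip()
    missing = [k for k in required_keys if k not in result]
    if missing:
        raise ValueError(f"LLM response is missing keys: {missing}")
    return result


raw_answer = "Sure, here is what you asked for:\nChart: scatter\nLibrary: Seaborn\nHope it helps!"
parsed = parse_key_value_response(raw_answer, ["chart", "library"])
print(json.dumps(parsed))  # {"chart": "scatter", "library": "Seaborn"}
```

Raising an error when a key is missing lets the calling code retry the request or fall back, instead of passing malformed data further down the pipeline.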

3. LLMs Have No Memory, but Some Applications Need One

Now just imagine you are talking with a company’s Q&A chatbot. You send a detailed description of what you need, the chatbot answers correctly and after a second iteration… it is all gone!

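This is pretty much what happens when calling any LLM via its API: each call is independent, so the model forgets every earlier part of the conversation the very moment we move on to the next turn. Chatbot interfaces such as ChatGPT only seem to remember because the previous messages are sent to the model again with each new turn.

On their own, LLMs have little or no memory.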

And this can lead to confusing or wrong answers.

As most of you have already guessed, LangChain is once again ready to help us.

LangChain offers memory classes that keep the model context-aware, either by storing the whole chat history or just a summary of it, so that the model does not give wrong replies for lack of context.
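The idea behind a memory class can be sketched in a few lines of plain Python. WindowChatMemory below is a hypothetical helper, not a LangChain class: it keeps only the last few exchanges and prepends them to every new prompt, because the model itself is stateless. A summary-based memory would instead ask the LLM to condense the older turns.

```python
from dataclasses import dataclass, field


@dataclass
class WindowChatMemory:
    """Hypothetical helper: remembers only the last `max_turns` exchanges."""

    max_turns: int = 3
    turns: list[tuple[str, str]] = field(default_factory=list)

    def save(self, user_msg: str, ai_msg: str) -> None:
        self.turns.append((user_msg, ai_msg))
        # Forget the oldest exchanges once the window is full.
        self.turns = self.turns[-self.max_turns:]

    def as_context(self) -> str:
        return "\n".join(f"User: {u}\nAI: {a}" for u, a in self.turns)


def build_prompt_with_memory(memory: WindowChatMemory, new_message: str) -> str:
    """Prepend the remembered history so the stateless model 'sees' the conversation."""
    history = memory.as_context()
    prefix = f"{history}\n" if history else ""
    return f"{prefix}User: {new_message}\nAI:"


memory = WindowChatMemory(max_turns=2)
memory.save("My order number is 123.", "Thanks, I found order 123.")
print(build_prompt_with_memory(memory, "When will it arrive?"))
```

Keeping a fixed window trades completeness for a bounded prompt size, which matters once conversations get long.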

4. Why choose a single LLM when you can have them all?

We all know OpenAI's GPT models still lead the realm of LLMs. However, there are plenty of other options out there, like Meta's Llama, Anthropic's Claude, or open-source models from the Hugging Face Hub.

If you only design your program for one company's language model, you're stuck with their tools and rules.

Building directly on the native API of a single model makes you completely dependent on its provider.

Imagine you built your app's AI features with GPT, but later found out you need to incorporate a feature that is better handled by Meta's Llama.

You will be forced to start all over from scratch… which is not good at all.

LangChain offers something called an LLM class. Think of it as a special tool that makes it easy to change from one language model to another, or even use several models at once in your app.

This is why developing directly with LangChain allows you to consider multiple models at once.
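The design idea behind this is to make application code depend on one shared interface rather than on a vendor SDK. The sketch below shows that pattern with a typing.Protocol and two stand-in model classes that make no real API calls; the names TextModel, FakeGPT, FakeLlama, and complete are hypothetical and are not LangChain's API.

```python
from typing import Protocol


class TextModel(Protocol):
    """Shared interface: any model just needs a complete(prompt) -> str method."""

    def complete(self, prompt: str) -> str: ...


class FakeGPT:
    """Stand-in for a GPT-backed client; no real API call is made."""

    def complete(self, prompt: str) -> str:
        return f"[gpt] {prompt}"


class FakeLlama:
    """Stand-in for a Llama-backed client; no real API call is made."""

    def complete(self, prompt: str) -> str:
        return f"[llama] {prompt}"


def summarize_ticket(model: TextModel, ticket: str) -> str:
    # The application relies only on the shared interface, never on a vendor SDK.
    return model.complete(f"Summarize this support ticket: {ticket}")


# Switching providers means passing a different object, not rewriting the app.
for model in (FakeGPT(), FakeLlama()):
    print(summarize_ticket(model, "The printer is out of paper"))
```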

5. Passing Data to the LLM is Tricky

Language models like GPT-4 are trained with huge volumes of text. This is why they work with text by nature. However, they usually struggle when it comes to working with data.

Why? You might ask.

Two main issues can be differentiated:

- When working with data, we first need to know how to store this data, and how to effectively select the data we want to show to the model. LangChain helps with this issue by using something called indexes. These let you bring in data from different places like databases or spreadsheets and set it up so it’s ready to be sent to the AI piece by piece.

- On the other hand, we need to decide how to put that data into the prompt we give the model. The easiest way is to put all the data directly into the prompt, but there are smarter ways to do it, too.

In this second case, LangChain has some special tools that use different methods to give data to the AI. You can use direct prompt stuffing, which puts the whole dataset right into the prompt, or more advanced options like Map-reduce, Refine, or Map-rerank. Map-reduce, for instance, first processes each chunk of data separately and then combines the partial answers into a final one. Either way, LangChain eases the way we send data to any LLM.
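Here is a toy sketch of both steps: splitting a document into chunks, picking the chunks that look relevant (a naive keyword-overlap stand-in for a real index, which would typically rely on embeddings), and then sending them to the model either by stuffing them into one prompt or with a map-reduce pass. All function names are hypothetical, and llm is assumed to be any function that maps a prompt to an answer.

```python
from typing import Callable

LLM = Callable[[str], str]  # assumption: any function from prompt to answer


def split_into_chunks(text: str, chunk_size: int) -> list[str]:
    """Split a document into chunks of at most `chunk_size` words."""
    words = text.split()
    return [" ".join(words[i:i + chunk_size]) for i in range(0, len(words), chunk_size)]


def select_relevant(chunks: list[str], question: str, k: int) -> list[str]:
    """Naive retrieval: rank chunks by how many question words they share."""
    q_words = set(question.lower().split())
    ranked = sorted(chunks, key=lambda c: len(q_words & set(c.lower().split())), reverse=True)
    return ranked[:k]


def stuff(llm: LLM, chunks: list[str], question: str) -> str:
    """Prompt stuffing: put every selected chunk into a single prompt."""
    context = "\n".join(chunks)
    return llm(f"Context:\n{context}\n\nQuestion: {question}")


def map_reduce(llm: LLM, chunks: list[str], question: str) -> str:
    """Map-reduce: answer per chunk (map), then combine the partial answers (reduce)."""
    partial = [llm(f"Context:\n{c}\n\nQuestion: {question}") for c in chunks]
    return llm("Combine these partial answers:\n" + "\n".join(partial))


calls: list[str] = []


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model: records each prompt and returns a fixed reply."""
    calls.append(prompt)
    return "partial answer"


chunks = split_into_chunks("sales rose in march while costs fell in april and hiring paused", 4)
relevant = select_relevant(chunks, "what happened to costs", k=2)
stuff(fake_llm, relevant, "what happened to costs")       # 1 model call
map_reduce(fake_llm, relevant, "what happened to costs")  # k + 1 = 3 model calls
print(len(calls))  # 4
```

Stuffing needs only one call but must fit everything into a single context window; map-reduce spends more calls in exchange for handling more data than one prompt can hold.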

6. Standardizing Development Interfaces

It’s always tricky to fit LLMs into bigger systems or workflows. For instance, you might need to get some info from a database, give it to the AI, and then use the AI’s answer in another part of your system.

LangChain has special features for these kinds of setups.

- Chains are like strings that tie different steps together in a simple, straight line.

- Agents are smarter and can make choices about what to do next, based on what the AI says.

LangChain also provides standardized interfaces that streamline the development process, making it easier to integrate and chain calls to LLMs and other utilities.
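The difference between a chain and an agent can be sketched in plain Python. Every name below (make_chain, FAKE_DB, fake_llm, fake_planner, TOOLS, run_agent) is hypothetical and no real model is called: the chain always runs the same steps in the same order, while the agent lets the model's reply decide which tool to use. This agent makes a single decision; real LangChain agents usually loop, calling tools repeatedly until the model decides it has an answer.

```python
import re
from typing import Callable

Step = Callable[[str], str]


def make_chain(*steps: Step) -> Step:
    """Chain: run the steps in a fixed order, feeding each output into the next."""
    def run(value: str) -> str:
        for step in steps:
            value = step(value)
        return value
    return run


FAKE_DB = {"42": "Order 42: shipped on Monday"}  # stand-in for a real database


def fetch_record(order_id: str) -> str:
    return FAKE_DB.get(order_id, "no record found")


def to_prompt(record: str) -> str:
    return f"Write a one-line status update for the customer based on: {record}"


def fake_llm(prompt: str) -> str:
    # Stand-in for a model call: reuses the data part of the prompt as the "answer".
    return "Status: " + prompt.split("based on: ", 1)[1]


status_chain = make_chain(fetch_record, to_prompt, fake_llm, str.upper)
print(status_chain("42"))  # STATUS: ORDER 42: SHIPPED ON MONDAY

TOOLS: dict[str, Step] = {
    "lookup_order": fetch_record,
    "calculator": lambda expr: str(sum(int(n) for n in expr.split("+"))),
}


def fake_planner(question: str) -> str:
    """Stand-in for an LLM that answers in the form 'tool_name|tool_input'."""
    numbers = re.findall(r"\d+", question)
    if "+" in question:
        return "calculator|" + "+".join(numbers)
    return "lookup_order|" + (numbers[-1] if numbers else "")


def run_agent(question: str) -> str:
    """Single-step agent: the model's reply decides which tool runs next."""
    tool_name, tool_input = fake_planner(question).split("|", 1)
    return TOOLS[tool_name](tool_input)


print(run_agent("What is 2 + 3?"))      # 5
print(run_agent("Where is order 42?"))  # Order 42: shipped on Monday
```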

In essence, LangChain offers a suite of tools and features that make it easier to develop applications with LLMs by addressing the intricacies of prompt crafting, response structuring, and model integration.

LangChain is more than just a framework; it's a game-changer in the world of data engineering and LLMs.

It’s the bridge between the complex, often chaotic world of AI and the structured, systematic approach needed in data applications.

As we wrap up this exploration, one thing is clear:

LangChain is not just shaping the future of LLMs; it's shaping the future of technology itself.

Josep Ferrer is an analytics engineer from Barcelona. He graduated in physics engineering and is currently working in the Data Science field applied to human mobility. He is a part-time content creator focused on data science and technology. You can contact him on LinkedIn, Twitter or Medium.