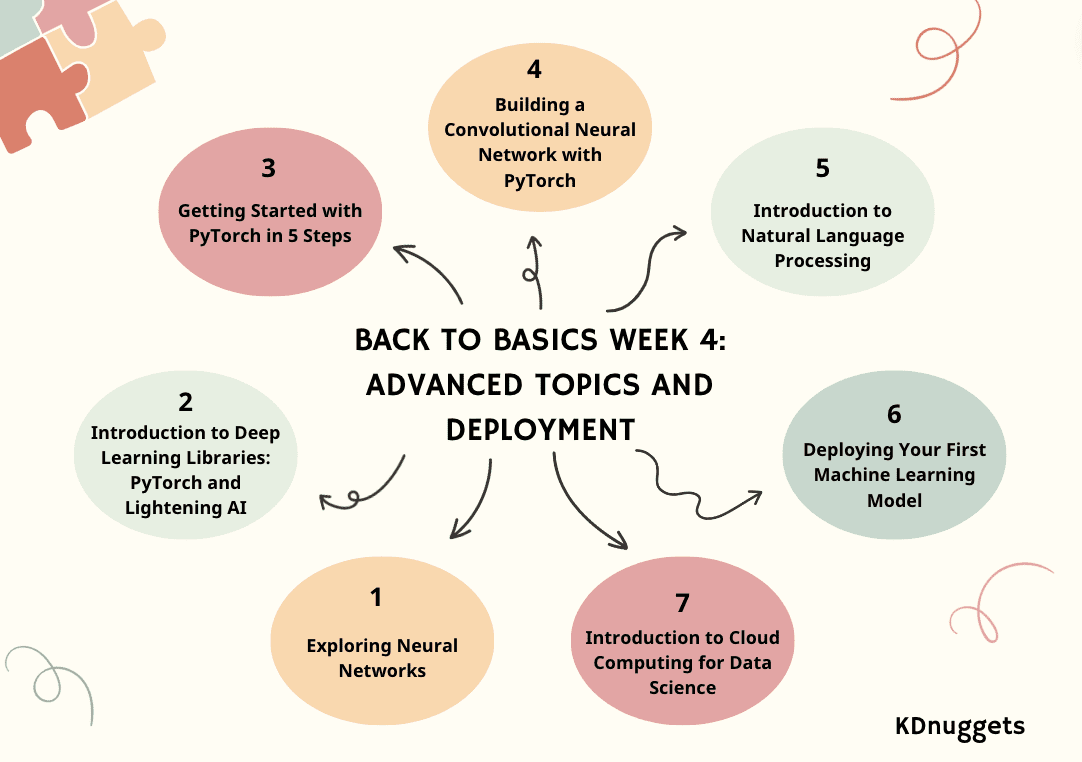

Image by Author

Join KDnuggets with our Back to Basics pathway to get you kickstarted with a new career or a brush up on your data science skills. The Back to Basics pathway is split up into 4 weeks with a bonus week. We hope you can use these blogs as a course guide.

If you haven’t already, have a look at:

Moving on to the fourth week, we will dive into advanced topics and deployment.

- Day 1: Exploring Neural Networks

- Day 2: Introduction to Deep Learning Libraries: PyTorch and Lightning AI

- Day 3: Getting Started with PyTorch in 5 Steps

- Day 4: Building a Convolutional Neural Network with PyTorch

- Day 5: Introduction to Natural Language Processing

- Day 6: Deploying Your First Machine Learning Model

- Day 7: Introduction to Cloud Computing for Data Science

Week 4 – Part 1: Exploring Neural Networks

Unlocking the power of AI: a guide to neural networks and their applications.

Imagine a machine thinking, learning, and adapting like the human brain and discovering hidden patterns within data.

The technology behind this is the neural network (NN), a family of algorithms that mimic cognition. We’ll explore what NNs are and how they function later.

In this article, I’ll explain the fundamental aspects of neural networks (NNs): their structure, their types, their real-life applications, and the key terms that define how they operate.
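To make the idea of network structure concrete, here is a minimal sketch of a forward pass through a tiny network with two inputs, two hidden neurons, and one output. It is not taken from the linked article: the function names, layer sizes, and weights are all illustrative assumptions, and a real network would learn its weights from data rather than use hand-picked values.

```python
import math


def sigmoid(x):
    """Squash a real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def dense_layer(inputs, weights, biases):
    """One fully connected layer: weighted sum plus bias, then a sigmoid activation."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(sigmoid(total))
    return outputs


def forward(inputs):
    """Forward pass through a 2-input, 2-hidden-neuron, 1-output network."""
    # Hand-picked, purely illustrative weights (an assumption for this sketch).
    hidden_weights = [[0.5, -0.6], [0.3, 0.8]]
    hidden_biases = [0.1, -0.2]
    output_weights = [[1.2, -0.7]]
    output_biases = [0.05]

    hidden = dense_layer(inputs, hidden_weights, hidden_biases)
    return dense_layer(hidden, output_weights, output_biases)[0]


prediction = forward([1.0, 0.0])
print(f"Network output: {prediction:.4f}")
```

Each neuron does the same thing: it weights its inputs, adds a bias, and passes the result through an activation function. Stacking layers of such neurons is what gives a network its structure.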

Week 4 – Part 2: Introduction to Deep Learning Libraries: PyTorch and Lightning AI

A simple explanation of PyTorch and Lightning AI.

Deep learning is a branch of machine learning based on neural networks. In other machine learning models, the data processing needed to find meaningful features is often done manually or relies on domain expertise; deep learning, however, can mimic the human brain to discover the essential features on its own, which can improve model performance.

There are many applications for deep learning models, including facial recognition, fraud detection, speech-to-text, text generation, and many more. Deep learning has become a standard approach in many advanced machine learning applications, and we have nothing to lose by learning about it.

To develop deep learning models, there are various libraries and frameworks we can rely on rather than working from scratch. In this article, we will discuss two libraries we can use to develop deep learning models: PyTorch and Lightning AI.

Week 4 – Part 3: Getting Started with PyTorch in 5 Steps

This tutorial provides an in-depth introduction to machine learning using PyTorch and its high-level wrapper, PyTorch Lightning. The article covers essential steps from installation to advanced topics, offering a hands-on approach to building and training neural networks, and emphasizing the benefits of using Lightning.

PyTorch is a popular open-source machine learning framework based on Python and optimized for GPU-accelerated computing. Originally developed by Meta AI in 2016 and now part of the Linux Foundation, PyTorch has quickly become one of the most widely used frameworks for deep learning research and applications.

PyTorch Lightning is a lightweight wrapper built on top of PyTorch that further simplifies research workflows and model development. With Lightning, data scientists can focus on designing models rather than writing boilerplate code.

Week 4 – Part 4: Building a Convolutional Neural Network with PyTorch

This blog post provides a tutorial on constructing a convolutional neural network for image classification in PyTorch, leveraging convolutional and pooling layers for feature extraction as well as fully connected layers for prediction.

A Convolutional Neural Network (CNN or ConvNet) is a deep learning algorithm specifically designed for tasks where object recognition is crucial – like image classification, detection, and segmentation. CNNs are able to achieve state-of-the-art accuracy on complex vision tasks, powering many real-life applications such as surveillance systems, warehouse management, and more.

As humans, we can easily recognize objects in images by analyzing patterns, shapes, and colors. CNNs can be trained to perform this recognition too, by learning which patterns are important for differentiation. For example, when trying to distinguish between a photo of a cat and a photo of a dog, our brain focuses on unique shapes, textures, and facial features. A CNN learns to pick up on these same types of distinguishing characteristics. Even for very fine-grained categorization tasks, CNNs are able to learn complex feature representations directly from pixels.
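As a rough illustration of what convolutional and pooling layers compute, the sketch below applies one hand-written filter to a tiny image and then max-pools the result. It uses plain Python rather than PyTorch, and the helper names `conv2d` and `max_pool2d`, the 5x5 image, and the edge-detecting kernel are all assumptions made for this example. In a real CNN the kernel values are learned during training.

```python
def conv2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1) and sum the
    elementwise products. Like most deep learning libraries, this does not
    flip the kernel, so strictly speaking it is a cross-correlation."""
    k_rows, k_cols = len(kernel), len(kernel[0])
    out_rows = len(image) - k_rows + 1
    out_cols = len(image[0]) - k_cols + 1
    output = []
    for r in range(out_rows):
        row = []
        for c in range(out_cols):
            total = 0
            for i in range(k_rows):
                for j in range(k_cols):
                    total += image[r + i][c + j] * kernel[i][j]
            row.append(total)
        output.append(row)
    return output


def max_pool2d(feature_map, size=2):
    """Keep the largest value in each non-overlapping size x size window."""
    pooled = []
    for r in range(0, len(feature_map) - size + 1, size):
        row = []
        for c in range(0, len(feature_map[0]) - size + 1, size):
            window = [feature_map[r + i][c + j]
                      for i in range(size) for j in range(size)]
            row.append(max(window))
        pooled.append(row)
    return pooled


# A 5x5 grayscale "image": dark (0) on the left, bright (1) on the right.
image = [[0, 0, 1, 1, 1] for _ in range(5)]

# Hand-written filter that responds to dark-to-bright vertical edges.
kernel = [
    [-1, 1],
    [-1, 1],
]

feature_map = conv2d(image, kernel)   # 4x4 map, large where the edge is
pooled = max_pool2d(feature_map)      # 2x2 summary of the feature map

print(feature_map)
print(pooled)
```

The feature map is nonzero only in the column where the image changes from dark to bright, and pooling shrinks that map while keeping the strongest response. A CNN stacks many such filters and feeds the pooled features into fully connected layers for the final prediction.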

Week 4 – Part 5: Introduction to Natural Language Processing

An overview of Natural Language Processing (NLP) and its applications.

We’re learning a lot about ChatGPT and large language models (LLMs). Natural Language Processing has long been an interesting topic, and it is currently taking the AI and tech world by storm. Yes, LLMs like ChatGPT have helped its growth, but wouldn’t it be good to understand where it all comes from? So let’s go back to the basics: NLP.

NLP is a subfield of artificial intelligence concerned with a computer’s ability to detect and understand human language, through speech and text, just the way we humans can. NLP helps models process, understand, and output human language.

The goal of NLP is to bridge the communication gap between humans and computers. NLP models are typically trained on tasks such as next-word prediction, which allows them to learn contextual dependencies and then generate relevant outputs.
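Next-word prediction can be sketched with nothing more than word counts. The toy bigram model below is a deliberately simplified stand-in, not how ChatGPT or other LLMs work: those use neural networks that condition on much longer context. The function names and the tiny corpus are assumptions made for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        following[current_word][next_word] += 1
    return following


def predict_next_word(model, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    candidates = model.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# Tiny made-up corpus; real models are trained on vastly more text.
corpus = "the cat sat on the mat . the cat chased the mouse . the dog sat on the rug"
model = train_bigram_model(corpus)

print(predict_next_word(model, "the"))
print(predict_next_word(model, "sat"))
print(predict_next_word(model, "unicorn"))
```

Even this crude model picks up a contextual dependency: after seeing which words tend to follow "the" in the corpus, it proposes the most common one. Modern NLP models apply the same training objective with far richer representations of context.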

Week 4 – Part 6: Deploying Your First Machine Learning Model

With just 3 simple steps, you can build & deploy a glass classification model faster than you can say…glass classification model!

In this tutorial, we will learn how to build a simple multiclass classification model using the Glass Classification dataset. Our goal is to develop and deploy a web application that can predict various types of glass, such as:

- Building Windows Float Processed

- Building Windows Non-Float Processed

- Vehicle Windows Float Processed

- Vehicle Windows Non-Float Processed (missing in the dataset)

- Containers

- Tableware

- Headlamps

Moreover, we will learn about:

- Skops: Share your scikit-learn based models and put them in production.

- Gradio: ML web applications framework.

- HuggingFace Spaces: free machine learning model and application hosting platform.

By the end of this tutorial, you will have hands-on experience building, training, and deploying a basic machine learning model as a web application.

Week 4 – Part 7: Introduction to Cloud Computing for Data Science

And the Power Duo of Modern Tech.

In today’s world, two main forces have emerged as game-changers: Data Science and Cloud Computing.

Imagine a world where colossal amounts of data are generated every second. Well… you do not have to imagine… It is our world!

From social media interactions to financial transactions, from healthcare records to e-commerce preferences, data is everywhere.

But what’s the use of this data if we can’t get value? That’s exactly what Data Science does.

And where do we store, process, and analyze this data? That’s where Cloud Computing shines.

Let’s embark on a journey to understand the intertwined relationship between these two technological marvels. Let’s (try to) discover it all together!

Congratulations on completing week 4!!

The team at KDnuggets hope that the Back to Basics pathway has provided readers with a comprehensive and structured approach to mastering the fundamentals of data science.

Bonus week will be posted next week on Monday – stay tuned!

Nisha Arya is a Data Scientist and Freelance Technical Writer. She is particularly interested in providing Data Science career advice, tutorials, and theory-based knowledge around Data Science. She also wishes to explore the different ways Artificial Intelligence can benefit the longevity of human life. She is a keen learner, seeking to broaden her tech knowledge and writing skills while helping guide others.