Beyond Human Blinders: How AI Is Rewriting Data Science’s Rules

AI and large models let data science move from curated samples to broad, auditable discovery—shifting bias, not eliminating it, and demanding new governance.

AI and large models are transforming data science by enabling far broader, less pre‑filtered data ingestion and automated discovery — but they do not eliminate bias; they shift where and how bias appears and make rigorous auditing and human oversight more essential than ever.

The promise: discovery without early human blinders

For decades, data science workflows began with human-curated filters: sampling rules, exclusion criteria, and feature selection that made problems tractable but also encoded assumptions about what “mattered.” Those early choices often removed signals before analysis began. Today, large language models (LLMs) and other foundation models can ingest far larger, more heterogeneous datasets and surface correlations and candidate hypotheses that pre-filters would have discarded. This expands the discovery space and accelerates exploratory science.

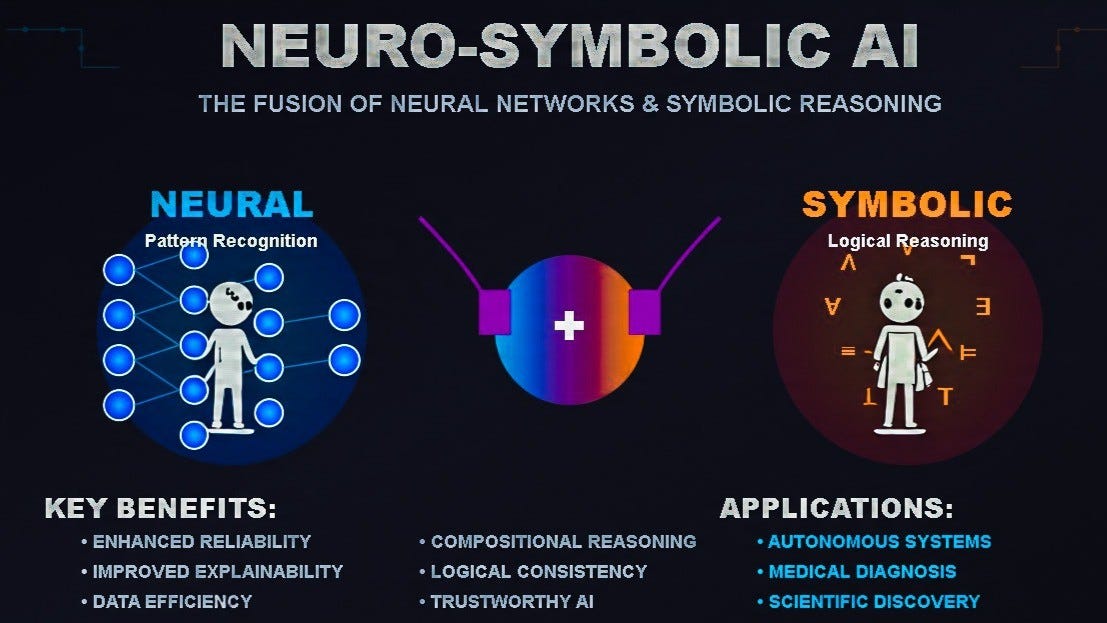

Interdependencies: AI, ML, and data science

- Data science frames questions, defines metrics, and validates outcomes.

- Machine learning provides algorithms that learn structure and generalize.

- AI (LLMs, foundation models) scales interpretation, feature extraction, and synthesis of unstructured sources.

Together they create a feedback loop: richer data enables stronger models; stronger models enable richer features; clearer questions from data scientists guide model selection.

Comparison table: human pre-filtering vs AI-driven ingestion

| Attribute | Human pre-filtering | AI-driven broad ingestion |
|---|---|---|
| Bias sources | Selection and confirmation bias | Training-data artifacts and label bias |
| Scalability | Limited by human effort | High; handles massive unstructured data |
| Transparency | Easier to audit | Often opaque; needs model audits |
| Risk of missing signals | High | Lower if data available |
| Best use case | Small, well-understood domains | Exploratory discovery and synthesis |

Why “objective” is misleading

Calling AI “objective” is dangerous shorthand. Models inherit the distributions and social patterns in their training data, so they can amplify historical biases even as they remove human pre-filters. Recent research shows both promise and limits. Targeted techniques such as neuron pruning can reduce certain biases in LLMs, but their effect depends on context, and one-size-fits-all fixes rarely generalize. Knowledge‑graph augmentation and other training strategies can substantially reduce biased associations, but they require domain‑specific design and evaluation. Reviews of debiasing methods emphasize that no single mitigation eliminates all harms; layered approaches are necessary.
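To make the amplification point concrete, here is a minimal sketch on invented data. The records, the 50% decision threshold, and the helper names `fit`, `predict`, `historical_rate`, and `model_rate` are all hypothetical. A "model" that simply learns historical approval rates, and approves whenever that rate is at least 50%, applies the same procedure to every applicant, yet it turns a partial historical disparity into a total one. For simplicity the group label is an explicit input here; in real systems the same effect usually arrives through proxy features.

```python
from collections import defaultdict

# Hypothetical historical decisions: (group, qualified, approved).
# The labels carry a historical bias: qualified applicants in group "B"
# were approved far less often than equally qualified applicants in group "A".
HISTORY = (
    [("A", True, True)] * 90 + [("A", True, False)] * 10
    + [("B", True, True)] * 40 + [("B", True, False)] * 60
    + [("A", False, False)] * 50 + [("B", False, False)] * 50
)


def fit(records):
    """Learn the empirical approval rate for each (group, qualified) cell."""
    counts = defaultdict(lambda: [0, 0])  # cell -> [approvals, total]
    for group, qualified, approved in records:
        cell = counts[(group, qualified)]
        cell[0] += int(approved)
        cell[1] += 1
    return {key: approvals / total for key, (approvals, total) in counts.items()}


def predict(model, group, qualified):
    """Approve whenever the learned historical approval rate is at least 50%."""
    return model.get((group, qualified), 0.0) >= 0.5


def historical_rate(records, group):
    """Share of qualified applicants in `group` who were approved in the past."""
    outcomes = [approved for g, q, approved in records if g == group and q]
    return sum(outcomes) / len(outcomes)


def model_rate(model, records, group):
    """Share of qualified applicants in `group` the fitted model would approve."""
    rows = [(g, q) for g, q, _ in records if g == group and q]
    return sum(predict(model, g, q) for g, q in rows) / len(rows)


model = fit(HISTORY)
for group in ("A", "B"):
    print(group, historical_rate(HISTORY, group), model_rate(model, HISTORY, group))
```

Running it prints `A 0.9 1.0` and `B 0.4 0.0`: qualified applicants in group B were approved 40% of the time historically, but the fitted model approves none of them. The procedure is uniform; the outcome is not.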

Practical guide: decision points and recommendations

Key considerations: data provenance, label quality, representativeness, regulatory constraints, and explainability.

Decision points: choose whether the goal is discovery (favour broad ingestion) or high‑stakes decisioning (favour curated, audited datasets). Clarifying questions teams should answer up front: What decisions will this support? Which groups could be harmed? What audit trails are required?
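One way to make these decision points hard to skip is to encode them as a gate at project setup. The sketch below is hypothetical: `ProjectBrief`, `choose_data_policy`, and the goal labels `"discovery"` and `"high_stakes"` are illustrative names, not part of any framework. It refuses to pick a data policy until the three up-front questions have answers.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class ProjectBrief:
    """Hypothetical record of the answers a team gives before starting."""
    goal: str                  # "discovery" or "high_stakes"
    decisions_supported: str   # What decisions will this support?
    groups_at_risk: list[str]  # Which groups could be harmed?
    audit_trails: list[str]    # What audit trails are required?


def choose_data_policy(brief: ProjectBrief) -> str:
    """Map the goal to a data policy, refusing to proceed on unanswered questions."""
    answers = (
        ("decisions_supported", brief.decisions_supported),
        ("groups_at_risk", brief.groups_at_risk),
        ("audit_trails", brief.audit_trails),
    )
    # Empty strings and empty lists both count as "not answered yet".
    missing = [name for name, value in answers if not value]
    if missing:
        raise ValueError(f"Answer these questions first: {', '.join(missing)}")
    if brief.goal == "discovery":
        return "broad_ingestion"   # favour broad, less pre-filtered data
    if brief.goal == "high_stakes":
        return "curated_audited"   # favour curated, audited datasets
    raise ValueError(f"Unknown goal: {brief.goal!r}")
```

Treating an empty `groups_at_risk` list as unanswered is a deliberate, conservative choice: a team that believes no group could be harmed should say so explicitly, for example with an entry like `"none identified"` plus its reasoning, rather than leave the field blank.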

Recommendations:

- Combine broad ingestion for exploration with targeted human constraints for deployment.

- Build automated bias audits and counterfactual tests into pipelines.

- Use domain‑specific mitigation (e.g., pruning, knowledge‑graph augmentation) and measure fairness metrics continuously.
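The audit and counterfactual recommendations can be sketched as two small checks that run on every candidate model. This is a minimal sketch, assuming binary predictions and a single categorical protected attribute; the thresholds (`max_gap=0.1`, `max_flip_rate=0.0`), the toy scoring rules, and the function names are hypothetical. Demographic parity difference is a standard group-fairness metric: the largest gap in positive-prediction rates between groups. The "counterfactual" check here is a simple attribute-swap test, which is weaker than full causal counterfactual fairness and will not catch bias carried by proxy features.

```python
from typing import Callable, Dict, List, Sequence

Row = Dict[str, object]        # one record, e.g. {"income": 60, "group": "A"}
Model = Callable[[Row], int]   # returns 1 (positive) or 0 (negative)


def demographic_parity_difference(preds: Sequence[int], groups: Sequence[str]) -> float:
    """Largest gap in positive-prediction rate between any two groups."""
    by_group: Dict[str, List[int]] = {}
    for pred, group in zip(preds, groups):
        by_group.setdefault(group, []).append(pred)
    rates = [sum(v) / len(v) for v in by_group.values()]
    return max(rates) - min(rates)


def counterfactual_flip_rate(model: Model, rows: Sequence[Row], attr: str,
                             values: Sequence[str]) -> float:
    """Share of rows whose prediction changes when only `attr` is swapped."""
    flips = 0
    for row in rows:
        outcomes = {model({**row, attr: value}) for value in values}
        if len(outcomes) > 1:
            flips += 1
    return flips / len(rows)


def audit(model: Model, rows: Sequence[Row], attr: str, values: Sequence[str],
          max_gap: float = 0.1, max_flip_rate: float = 0.0) -> dict:
    """Run both checks and report whether the model passes the hypothetical thresholds."""
    preds = [model(row) for row in rows]
    gap = demographic_parity_difference(preds, [row[attr] for row in rows])
    flip_rate = counterfactual_flip_rate(model, rows, attr, values)
    return {"parity_gap": gap, "flip_rate": flip_rate,
            "passed": gap <= max_gap and flip_rate <= max_flip_rate}


# Two toy scoring rules: one ignores group, one applies a stricter cut-off to group "B".
def group_blind(row: Row) -> int:
    return int(row["income"] >= 50)


def group_dependent(row: Row) -> int:
    return int(row["income"] >= (50 if row["group"] == "A" else 70))


rows = [{"income": i, "group": g} for g in ("A", "B") for i in (40, 60)]
print(audit(group_blind, rows, "group", ["A", "B"]))
print(audit(group_dependent, rows, "group", ["A", "B"]))
```

The group-blind rule passes (`parity_gap` 0.0, `flip_rate` 0.0), while the group-dependent rule fails on both checks (0.5 each). A parity gap can also appear for a group-blind model when groups differ in the underlying data, which is why the two checks are reported separately rather than merged into one score.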

Risks and mitigations

Risk: model amplification of historical bias leading to unfair outcomes.

Mitigations: provenance checks, counterfactual evaluation, diverse test sets, and continuous monitoring; hold deployers accountable for context‑specific harms.
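Provenance checks and continuous monitoring can share one loop that runs on every scored batch. The following is a minimal sketch, assuming batches of dict records with a `group` field and binary predictions; `FairnessMonitor`, `fingerprint`, the source allowlist, and the thresholds are all hypothetical. Each batch is content-hashed with SHA-256 for the audit trail, checked against approved sources, and folded into a rolling average of the parity gap, so alerts reflect a sustained shift rather than a single noisy batch.

```python
from __future__ import annotations

import hashlib
import json
from collections import deque


def fingerprint(records: list[dict]) -> str:
    """Content hash of a batch, so later audits can show exactly which data was scored."""
    payload = json.dumps(records, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class FairnessMonitor:
    """Hypothetical monitor: provenance allowlist plus a rolling parity-gap alert."""

    def __init__(self, approved_sources, max_gap: float = 0.1, window: int = 3):
        self.approved_sources = set(approved_sources)
        self.max_gap = max_gap
        self.gaps = deque(maxlen=window)  # only the most recent `window` gaps are kept
        self.log = []                     # audit trail: (fingerprint, gap, alerts)

    def check_batch(self, source: str, records: list[dict], preds: list[int],
                    attr: str = "group") -> list[str]:
        alerts = []
        # Provenance: flag data from sources nobody has vetted.
        if source not in self.approved_sources:
            alerts.append(f"provenance: unapproved source {source!r}")
        # Fairness: positive-prediction rate per group, then the largest gap.
        by_group: dict[str, list[int]] = {}
        for record, pred in zip(records, preds):
            by_group.setdefault(record[attr], []).append(pred)
        rates = [sum(v) / len(v) for v in by_group.values()]
        gap = max(rates) - min(rates)
        self.gaps.append(gap)
        rolling = sum(self.gaps) / len(self.gaps)
        if rolling > self.max_gap:
            alerts.append(f"fairness: rolling parity gap {rolling:.2f} > {self.max_gap}")
        self.log.append((fingerprint(records), gap, alerts))
        return alerts
```

In production the alerts would be routed to whoever is accountable for the deployment, and the in-memory `log` list would be replaced by a durable audit store.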

Closing

The future of data science is not human‑free analysis; it is human‑plus‑AI workflows. AI expands what we can see; humans must decide what we should act on, and build the governance to ensure those actions are fair, explainable, and aligned with societal values.

Written/published by Kevin Marshall with the help of AI models (AI Quantum Intelligence).
