The Hidden Cost of Synthetic Drift: Why Models Quietly Degrade

Synthetic data can quietly erode model fidelity. Learn how synthetic drift accumulates, how it triggers model collapse, and how organizations can detect the early warning signals.

Introduction: The Silent Erosion No One Notices—Until It’s Too Late

AI systems rarely fail with a bang. They fail with a whisper.

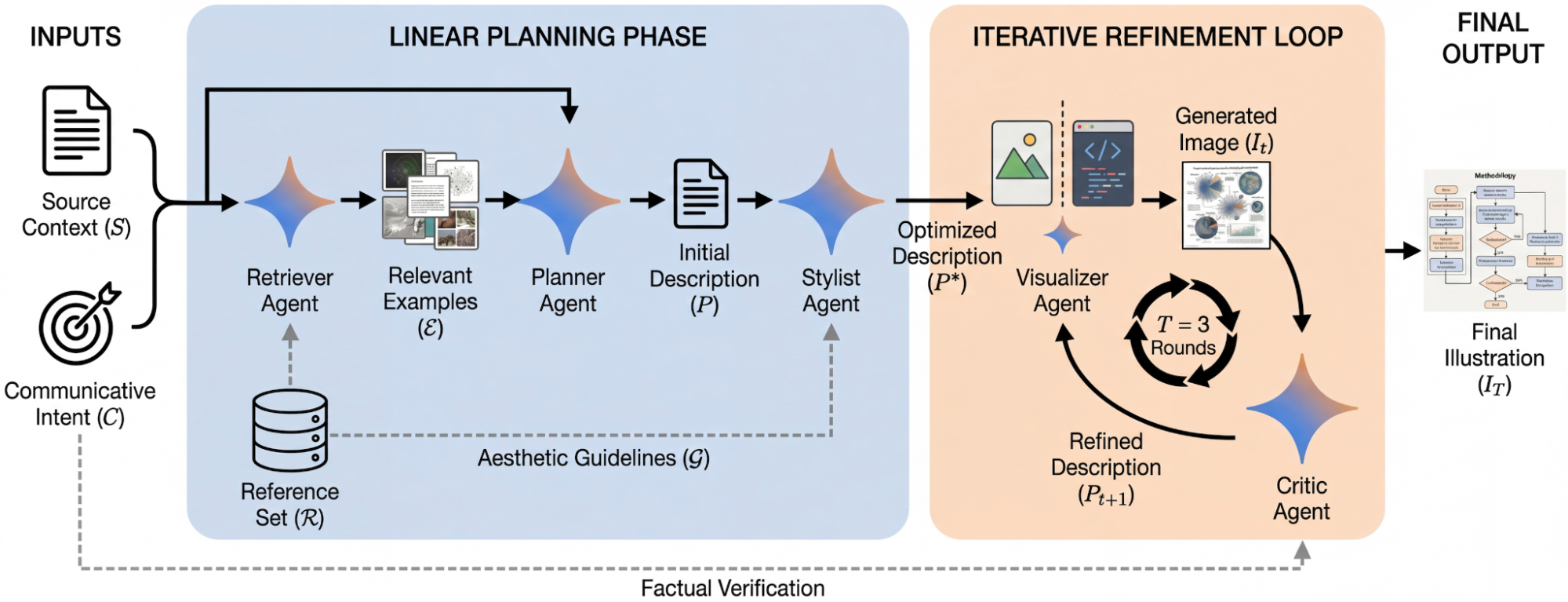

In the age of generative AI, organizations increasingly rely on synthetic data to accelerate development, reduce privacy risk, and fill gaps where real‑world data is scarce. But beneath the convenience lies a subtle, compounding threat: synthetic drift—the gradual, often invisible degradation of model quality caused by training on data generated by other models.

This drift doesn’t announce itself. Metrics look stable. Benchmarks hold. Dashboards stay green. Yet underneath, the model’s internal representation of reality is quietly warping.

Left unchecked, these imperceptible shifts accumulate into large‑scale model collapse, where systems lose diversity, accuracy, and grounding in the real world. And by the time symptoms appear, the damage is already deep.

This article breaks down why synthetic drift happens, how it compounds, and what early warning signals organizations must monitor to avoid catastrophic degradation.

1. Why Synthetic Drift Happens: The Physics of Imperfect Copies

1.1 Synthetic Data Is a Model of a Model

Synthetic data is not reality—it’s a statistical approximation of reality. As Humans in the Loop notes, synthetic datasets “replicate patterns [models] have already learned,” meaning they inherently lack the messy, nonlinear, chaotic edge cases that define real‑world environments.

Every synthetic sample carries the fingerprints of the model that generated it: its biases, its blind spots, its smoothing tendencies.

1.2 Imperceptible Errors Become Ground Truth

AutomationInside describes this as generative data drift—tiny statistical errors introduced by a model become amplified when subsequent models treat those errors as truth.

Think of it like photocopying a photocopy. Each generation looks “fine,” but fidelity quietly erodes.

1.3 The Feedback Loop Problem

When organizations train new models on synthetic data produced by earlier models, they create a closed‑loop system. Over time:

- Rare events disappear

- High‑frequency details blur

- Diversity collapses

- Biases compound

This iterative decay is well‑documented: each generation becomes slightly worse than the last, even if metrics appear stable.
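The photocopy effect can be reproduced in a toy setting. The sketch below is an illustration under stated assumptions, not a result from the sources cited here: it treats a "model" as a one-dimensional Gaussian, fits it to a small sample, then draws the next generation's training data from that fit. The function name `simulate_generations` and all parameter values are hypothetical.

```python
import random
import statistics

def simulate_generations(n_generations=200, sample_size=20, seed=0):
    """Repeatedly fit a Gaussian to samples drawn from the previous fit.

    Returns the fitted standard deviation at each generation, starting
    with the "real" distribution (standard deviation 1.0).
    """
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0  # generation 0: stand-in for real-world data
    history = [sigma]
    for _ in range(n_generations):
        # Train only on the previous generation's synthetic output.
        samples = [rng.gauss(mu, sigma) for _ in range(sample_size)]
        mu = statistics.fmean(samples)
        sigma = statistics.pstdev(samples)  # maximum-likelihood estimate
        history.append(sigma)
    return history

history = simulate_generations()
print(f"spread at generation 0:   {history[0]:.4f}")
print(f"spread at generation 200: {history[-1]:.4f}")
```

Each refit slightly underestimates the spread on average, and because no fresh real data ever enters the loop, those small losses compound. In this toy the tails (the rare events) are the first thing to go.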

2. How Small Shifts Accumulate Into Large‑Scale Collapse

2.1 Loss of Diversity and Mode Collapse

Synthetic data tends to smooth out extremes. Over generations, models lose the ability to represent rare or complex patterns. This leads to:

- Repetitive text

- Generic images

- Narrower output distributions

AutomationInside highlights this as a defining symptom of model collapse.

2.2 Increased Hallucinations

As the model’s grounding in real‑world distributions weakens, hallucinations rise. The system becomes confident but wrong—an especially dangerous failure mode for enterprise applications.

2.3 Drift Between Synthetic Reality and Actual Reality

Synthetic datasets often fail to capture the chaotic variability of real environments. When deployed, models encounter conditions they were never exposed to, causing sudden performance drops. Humans in the Loop identifies this as a primary cause of silent model failure.

2.4 Knowledge Forgetting

Repeated training on synthetic data causes models to “forget” previously learned information. This is the photocopier effect described in model‑decay research: each generation loses a bit more fidelity.

3. Why Organizations Miss the Warning Signs

3.1 Metrics Stay Green—Until They Don’t

Synthetic validation sets tend to share the statistical structure of the synthetic training data, so validation metrics appear stable. But these metrics measure similarity to the generator's own distribution, not agreement with the real world.

3.2 Benchmarks Don’t Capture Drift

Benchmarks are static. Drift is dynamic. A model can ace GLUE or SuperGLUE while quietly losing real‑world robustness.

3.3 Synthetic Data Masks Bias Amplification

Biases embedded in synthetic data compound over generations, but because the data is internally consistent, the bias is invisible until deployment.

4. Early Warning Signals: How to Detect Synthetic Drift Before It’s Too Late

Organizations need a multi‑layered detection strategy. Here are the most reliable early indicators.

4.1 Declining Output Diversity

One of the earliest signs of drift is a measurable reduction in:

- Vocabulary richness

- Structural variety

- Image texture complexity

- Behavioral variability in agents

This is often detectable before accuracy drops.
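For text models, one simple way to put a number on vocabulary richness is the share of n-grams in a batch of outputs that are unique, often called distinct-n. The sketch below assumes whitespace tokenization, which is a simplification, and the function name is illustrative.

```python
def distinct_n(texts, n=2):
    """Fraction of n-grams that are unique across a batch of outputs.

    Values near 1.0 mean varied output; values falling over successive
    model generations suggest narrowing diversity.
    """
    ngrams = []
    for text in texts:
        tokens = text.lower().split()  # naive whitespace tokenization
        ngrams.extend(
            tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)
        )
    return len(set(ngrams)) / len(ngrams) if ngrams else 0.0

varied = ["the cat sat", "a dog ran home"]
repetitive = ["the cat sat", "the cat sat"]
print(distinct_n(varied))      # 1.0
print(distinct_n(repetitive))  # 0.5
```

Computed on a fixed prompt set for every model generation, a score like this gives a diversity curve that can be compared across releases.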

4.2 Subtle Benchmark Degradation

Even small declines in benchmark performance—especially on tasks previously mastered—signal that the model is losing representational fidelity.

4.3 Rising Hallucination Rates

Track hallucinations longitudinally. A slow uptick is often the first visible symptom of deeper collapse.

4.4 Divergence Between Synthetic and Real‑World Error Profiles

If your model performs well on synthetic validation sets but poorly on real‑world samples, you’re already in drift territory. Humans in the Loop identifies this mismatch as a hallmark of silent model failure.
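A minimal sketch of this check, assuming you hold a labeled real-world sample alongside the synthetic validation set. The function names and the toy labels are hypothetical.

```python
def error_rate(predictions, labels):
    """Share of predictions that do not match their labels."""
    if len(predictions) != len(labels) or not labels:
        raise ValueError("predictions and labels must be non-empty and equal length")
    wrong = sum(p != y for p, y in zip(predictions, labels))
    return wrong / len(labels)

def synthetic_real_gap(synth_preds, synth_labels, real_preds, real_labels):
    """Real-world error minus synthetic-validation error.

    A positive and growing gap means the model looks better on
    synthetic data than it actually is on real data.
    """
    return error_rate(real_preds, real_labels) - error_rate(synth_preds, synth_labels)

gap = synthetic_real_gap(
    synth_preds=[1, 0, 1, 1], synth_labels=[1, 0, 1, 1],  # 0 of 4 wrong
    real_preds=[1, 0, 0, 1], real_labels=[1, 1, 1, 1],    # 2 of 4 wrong
)
print(gap)  # 0.5
```

What counts as an acceptable gap depends on the application; the useful signal is usually how the gap moves from one model generation to the next.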

4.5 Loss of Rare‑Event Competence

Monitor performance on:

- Edge cases

- Long‑tail distributions

- High‑variance scenarios

Synthetic data rarely captures these, so degradation here is a strong drift signal.
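Aggregate accuracy can hide tail degradation because rare cases carry little weight in the average. One way to surface it, sketched here under the assumption that each evaluation record is tagged with a slice name, is to report accuracy per slice. The slice names and record layout are illustrative.

```python
from collections import defaultdict

def accuracy_by_slice(records):
    """Compute accuracy separately for each named evaluation slice.

    records: iterable of (slice_name, prediction, label) tuples.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for slice_name, prediction, label in records:
        total[slice_name] += 1
        correct[slice_name] += int(prediction == label)
    return {name: correct[name] / total[name] for name in total}

records = [
    ("common", "a", "a"),
    ("common", "b", "b"),
    ("rare", "a", "b"),
    ("rare", "c", "c"),
]
print(accuracy_by_slice(records))  # {'common': 1.0, 'rare': 0.5}
```

Tracking the rare-slice number on its own makes a tail regression visible even when the headline figure barely moves.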

5. How Organizations Can Prevent Collapse

5.1 Maintain a Real‑World Data Anchor

Cap synthetic data at a fixed share of your training corpus, and set that cap empirically for your use case rather than by convention. Real-world data must remain the grounding force.
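A cap like this can be enforced when the training set is assembled. The sketch below is one possible approach, not a prescribed recipe: it keeps every real example and samples only as many synthetic examples as the cap allows. The function name, the 0.5 value in the usage, and the tagged-tuple data are assumptions for illustration.

```python
import random

def build_training_mix(real, synthetic, max_synthetic_fraction, seed=0):
    """Combine all real examples with a capped random subset of synthetic ones."""
    if not 0 <= max_synthetic_fraction < 1:
        raise ValueError("max_synthetic_fraction must be in [0, 1)")
    # Solve s / (r + s) <= f for s:  s <= f * r / (1 - f)
    max_synth = int(max_synthetic_fraction * len(real) / (1 - max_synthetic_fraction))
    rng = random.Random(seed)
    chosen = rng.sample(list(synthetic), min(max_synth, len(synthetic)))
    mix = list(real) + chosen
    rng.shuffle(mix)
    return mix

real = [("real", i) for i in range(80)]
synthetic = [("synthetic", i) for i in range(200)]
mix = build_training_mix(real, synthetic, max_synthetic_fraction=0.5)
synthetic_share = sum(1 for source, _ in mix if source == "synthetic") / len(mix)
print(len(mix), synthetic_share)  # 160 0.5
```

Because the cap is expressed relative to the amount of real data, adding more synthetic examples never dilutes the real-world anchor; only collecting more real data raises the synthetic budget.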

5.2 Use Human‑in‑the‑Loop Validation

Human-in-the-loop (HITL) workflows catch the gaps that synthetic data leaves open, which makes them essential for detecting drift early.

5.3 Implement Drift‑Aware Training Pipelines

This includes:

- Cross‑generation consistency checks

- Diversity‑preserving regularization

- Real‑world shadow evaluations
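As one illustration of a cross-generation consistency check, the sketch below compares the token frequency distributions of two model generations using Jensen-Shannon divergence. Choosing this particular measure, and comparing raw token counts, are assumptions made for the example; embeddings or task-specific features could play the same role.

```python
import math
from collections import Counter

def js_divergence(counts_a, counts_b):
    """Jensen-Shannon divergence (base 2) between two count mappings.

    Returns 0.0 for identical distributions and 1.0 for distributions
    with no tokens in common.
    """
    total_a = sum(counts_a.values())
    total_b = sum(counts_b.values())
    if total_a == 0 or total_b == 0:
        raise ValueError("both count mappings must be non-empty")
    divergence = 0.0
    for key in set(counts_a) | set(counts_b):
        p = counts_a.get(key, 0) / total_a
        q = counts_b.get(key, 0) / total_b
        m = 0.5 * (p + q)  # mixture distribution
        if p > 0:
            divergence += 0.5 * p * math.log2(p / m)
        if q > 0:
            divergence += 0.5 * q * math.log2(q / m)
    return divergence

gen_1 = Counter("the quick brown fox jumps over the lazy dog".split())
gen_2 = Counter("the quick brown fox jumps over the lazy dog".split())
print(js_divergence(gen_1, gen_2))  # 0.0
```

Running the same prompts through each new generation and comparing against a fixed reference generation, rather than only the immediate predecessor, helps expose slow cumulative movement that step-to-step comparisons would miss.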

5.4 Avoid Closed‑Loop Training

Never train a model exclusively on data generated by its predecessors. Break the loop with external data injections.

5.5 Monitor Longitudinal Metrics, Not Snapshots

Drift is a trend, not an event. Track:

- Diversity over time

- Hallucination rates

- Real‑world error divergence

- Benchmark decay curves
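Treating drift as a trend means fitting a slope to each metric's history instead of comparing the latest value to a fixed threshold. The sketch below uses an ordinary least-squares slope over equally spaced checkpoints; the function name and the sample values are hypothetical.

```python
def trend_slope(values):
    """Least-squares slope of a metric over equally spaced checkpoints."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two checkpoints")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    covariance = sum((i - mean_x) * (v - mean_y) for i, v in enumerate(values))
    variance = sum((i - mean_x) ** 2 for i in range(n))
    return covariance / variance

# Hypothetical hallucination rates from six consecutive evaluations.
hallucination_rates = [0.020, 0.021, 0.023, 0.024, 0.027, 0.029]
slope = trend_slope(hallucination_rates)
print(f"change per checkpoint: {slope:+.4f}")
```

No single value in a series like this would trip a snapshot alarm, yet a consistently positive slope is exactly the kind of slow uptick described above. The same function applies to diversity scores and benchmark results, where a negative slope is the warning sign.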

Conclusion: The Future Belongs to Organizations That Treat Synthetic Data With Respect

Synthetic data is powerful—but it is not neutral.

Used carelessly, it becomes a slow‑acting toxin that erodes model fidelity from the inside out. Used wisely, with guardrails and real‑world anchors, it becomes a force multiplier.

The organizations that thrive in the next decade will be those that understand the hidden cost of synthetic drift and build systems that detect, mitigate, and counteract it long before collapse sets in.

Your models won’t fail loudly. They’ll fail quietly.

The question is whether you’ll hear the warning signs in time.

References:

- 3 Ways Synthetic Data Breaks Models and How Human Validators Fix Them | Humans in the Loop

- AutomationInside: https://www.automationinside.com/content/ai-model-collapse-synthetic-training

- Preventing Model Collapse with Synthetic Data

Written/published by AI Quantum Intelligence with the help of AI models.
