AI Cybersecurity’s False Sense of Supremacy: Why the Real Advantage Still Belongs to Humans Who Know How to Use Machines

AI is accelerating attackers as fast as defenders. True cyber resilience comes from human creativity augmented by machine speed—not AI alone.

For years, the cybersecurity industry has sold a seductive promise: that AI-enabled defense systems will finally tip the balance in favor of defenders. Faster detection. Automated response. Predictive analytics. A future where algorithms outpace adversaries and digital infrastructure becomes self‑healing.

It’s a compelling narrative. It’s also incomplete.

The truth is far more uncomfortable: AI has accelerated attackers at least as much as it has empowered defenders—and in some domains, more. The result is not a decisive advantage but a rapidly escalating arms race where neither side holds stable ground.

The question organizations must confront is no longer “Should we adopt AI for cybersecurity?” but rather: “Are we building defenses that actually outpace the threats—or simply buying tools that make us feel safer?”

The Illusion of Progress: Why AI Isn’t Winning the Cyber War

There’s no denying that AI has improved defensive capabilities. Modern SOCs can detect anomalies in seconds, correlate signals across millions of endpoints, and automate containment actions that once required human intervention.

But attackers have gained something even more dangerous: creativity at scale.

AI now enables:

- Malware that mutates on every execution
- Automated reconnaissance that maps entire cloud estates in minutes
- Hyper-personalized phishing indistinguishable from human communication
- Exploit development accelerated by code‑generation models

The barrier to entry for sophisticated attacks has collapsed. What once required nation‑state resources can now be executed by small, well‑funded groups—or even individuals with the right tooling.

Defenders face a brutal asymmetry: They must be perfect. Attackers only need to be lucky.

The Myth of the “Upper Hand”

Vendors love to claim that AI gives defenders the upper hand. But the reality is more nuanced.

Where AI helps defenders

- Speed
- Scale
- Exhaustive monitoring
- Automated response

Where AI helps attackers

- Novelty
- Variability
- Unpredictability
- Rapid iteration

Defensive AI is inherently conservative—it must avoid false positives, maintain uptime, and operate within governance constraints. Offensive AI has no such limitations. It can be reckless, experimental, and iterative.
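The false-positive constraint can be made concrete with a little base-rate arithmetic. The sketch below applies Bayes' rule to a hypothetical detector; the function name and every number in it are illustrative assumptions, not measurements from any real product.

```python
def alert_precision(base_rate: float,
                    true_positive_rate: float,
                    false_positive_rate: float) -> float:
    """Return the fraction of alerts that are real attacks (Bayes' rule)."""
    true_alerts = base_rate * true_positive_rate
    false_alerts = (1.0 - base_rate) * false_positive_rate
    return true_alerts / (true_alerts + false_alerts)


# Hypothetical scenario: 1 event in 10,000 is malicious, and the detector
# catches 99% of attacks while misfiring on 1% of benign events.
precision = alert_precision(base_rate=1e-4,
                            true_positive_rate=0.99,
                            false_positive_rate=0.01)
print(f"Share of alerts that are real attacks: {precision:.2%}")
```

Under these assumed numbers, only about 1 in 100 alerts points at a real attack, which is why a defensive system that acts automatically has to be tuned far more cautiously than an attacker's tooling.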

This is why the “upper hand” narrative collapses under scrutiny. AI is not a shield. It is an accelerant—for both sides.

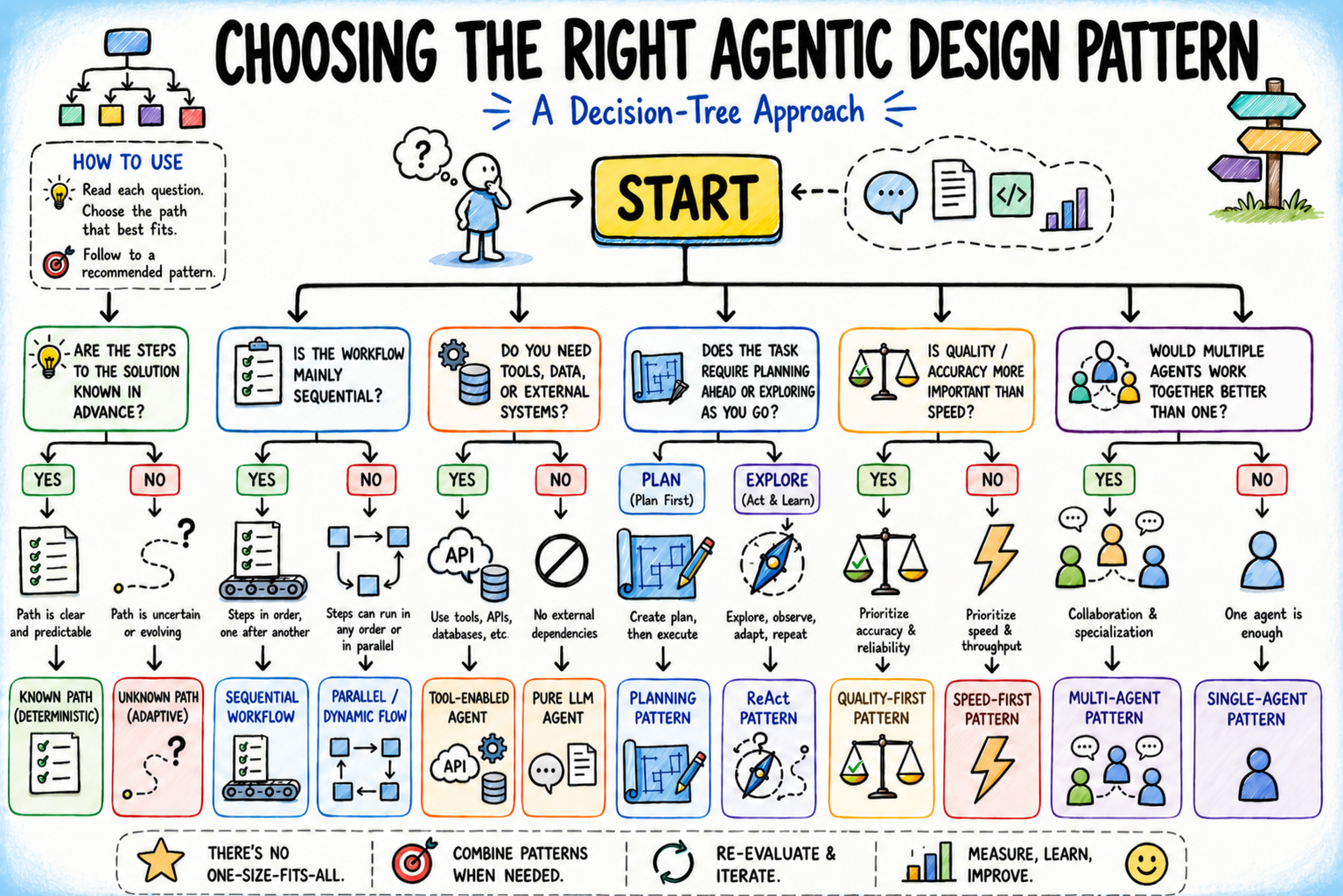

The Only Sustainable Advantage: Human Creativity Augmented by Machine Speed

If AI alone cannot secure the future, what can?

A hybrid model where human adversarial thinking is fused with AI’s computational power.

Humans bring:

- Creativity
- Contextual judgment
- Lateral thinking
- Understanding of business impact

AI brings:

- Pattern recognition
- Real-time correlation
- Instantaneous response
- Scalability

This is the model used by the most advanced cyber defense organizations on the planet. Not AI replacing humans. Not humans resisting automation. But humans who know how to weaponize AI defensively.
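One way to picture this division of labor is a triage rule that lets automation act alone only when the model is very confident and the business stakes are low, and hands everything ambiguous or business-critical to an analyst. The following is a minimal sketch under stated assumptions: the `Alert` fields, the `route` function, and both thresholds are hypothetical and do not describe any particular vendor's workflow.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    AUTO_CONTAIN = "auto_contain"   # machine speed: act without waiting
    HUMAN_REVIEW = "human_review"   # human judgment: context and impact
    LOG_ONLY = "log_only"           # keep for later correlation


@dataclass(frozen=True)
class Alert:
    source: str              # hypothetical: which sensor raised the alert
    model_score: float       # hypothetical: model confidence, 0.0 to 1.0
    business_critical: bool  # hypothetical: does it touch a critical asset?


def route(alert: Alert,
          contain_threshold: float = 0.95,
          review_threshold: float = 0.5) -> Action:
    """Decide who handles an alert. Thresholds are illustrative assumptions."""
    # Anything suspicious on a critical asset goes to a person, because
    # automated containment there could itself cause an outage.
    if alert.business_critical and alert.model_score >= review_threshold:
        return Action.HUMAN_REVIEW
    if alert.model_score >= contain_threshold:
        return Action.AUTO_CONTAIN
    if alert.model_score >= review_threshold:
        return Action.HUMAN_REVIEW
    return Action.LOG_ONLY


if __name__ == "__main__":
    for alert in (Alert("endpoint", 0.99, False),
                  Alert("endpoint", 0.99, True),
                  Alert("mail", 0.70, False)):
        print(alert.source, alert.model_score, route(alert).value)
```

The design choice worth noting is that the critical-asset check comes first: the machine's confidence never overrides a human's understanding of business impact.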

The future of cybersecurity is not artificial intelligence. It is augmented intelligence.

Should Organizations Keep Spending on AI Cyber Tools? Yes—but Not Blindly

If attackers’ AI is equal to or better than defenders’, does it still make sense to invest in AI-enabled cybersecurity?

Absolutely—but only with strategic clarity.

AI is essential because:

- Attack volume is too high for humans alone
- Identity attacks move too fast for manual response
- Regulators increasingly expect AI-assisted monitoring
- AI is now baseline infrastructure, not a luxury

But AI investment only pays off when paired with:

- Skilled analysts
- Strong identity governance
- Zero-trust architecture
- Continuous red teaming
- Mature incident response

Buying AI tools without the human layer is like buying a fighter jet without a pilot.

The Real Spending Problem: Misallocation, Not Underinvestment

Most organizations overspend on:

- “Next-gen” dashboards
- Automated tools with vague promises
- Vendor hype cycles

And underspend on:

- Threat hunters
- Architecture hardening
- Identity lifecycle management
- Red team simulations
- Incident readiness

AI amplifies whatever foundation exists. If the foundation is weak, AI amplifies the weakness.

The Hard Truth: AI Will Not Save Us—But Humans Who Use AI Well Might

The cybersecurity industry must abandon the fantasy that AI alone will secure the digital world. Attackers innovate too quickly. Models are too predictable. And the threat landscape is too dynamic for static automation.

The real advantage belongs to organizations that understand a simple principle:

AI gives defenders speed. Humans give defenders unpredictability. Together, they create resilience.

The future of cybersecurity will not be won by machines or by humans—but by the teams that know how to combine the two into something neither could achieve alone.

Written/developed by AI Quantum Intelligence with the help of AI models.
