Regulating the Unregulatable: Why AI Governance Is Already Behind

AI governance is already lagging behind frontier model evolution. Explore why regulation built for static systems cannot keep pace with dynamic, rapidly advancing AI.

Frontier AI is evolving faster than any regulatory framework on the planet, creating a widening gap between what governments think they’re governing and what frontier labs are actually building. This article examines why the regulatory paradigm is already outdated—and what a realistic governance model must look like in an era where AI capabilities shift faster than legislation can be drafted.

1. The Core Problem: Regulation Assumes a World That No Longer Exists

Most global AI governance efforts — from the EU AI Act to U.S. executive frameworks — were built on a foundational assumption: pretraining scale is the primary driver of frontier model capability. That assumption is now breaking.

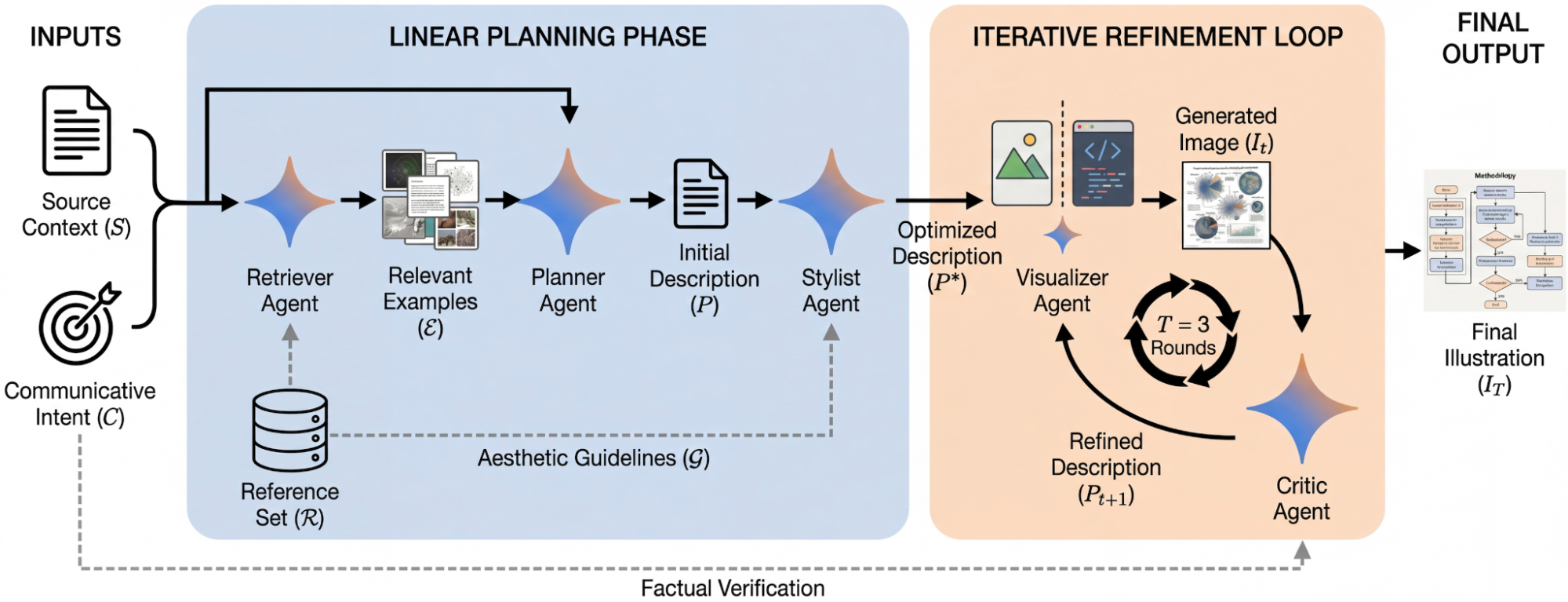

Recent research shows that jurisdictions worldwide are preparing laws that still treat pretraining scale as the key point of oversight, even as frontier labs pivot toward alternative capability pathways such as inference-time reasoning (spending more compute on each response) and post-training optimization.

This mismatch means regulators are building guardrails for a road the industry is no longer driving on.

2. The Frontier Has Shifted — and Regulation Hasn’t

Frontier AI models increasingly exhibit the following:

- Unexpected emergent capabilities

- Rapid post‑deployment evolution

- Reasoning improvements that don’t require massive new training runs

- Proliferation risks once models are released

OpenAI’s analysis highlights that dangerous capabilities can arise unpredictably, are difficult to contain once deployed, and can proliferate widely—making traditional regulatory levers (licensing, training‑run thresholds, compliance audits) insufficient on their own.

In other words: regulators are trying to govern static systems, but frontier AI is dynamic, keeps changing after deployment, and is increasingly modular.

3. Why Traditional Governance Models Fail

3.1 Slow Legislative Cycles vs. Fast Capability Cycles

Governments legislate in multi‑year cycles. Frontier AI evolves in multi-month—or multi-week—cycles. This temporal mismatch guarantees persistent regulatory lag.

3.2 Over‑reliance on Pretraining Metrics

Most governance frameworks still assume that:

- Bigger models = more risk

- Compute thresholds = meaningful oversight points

But as research shows, the “pretraining frontier” is no longer the sole determinant of capability. New paradigms diffuse risk across the entire lifecycle, making scale‑based regulation increasingly obsolete.
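A rough back-of-the-envelope sketch in Python illustrates the gap. It assumes the common rule of thumb that pretraining a dense transformer costs roughly 6 × parameters × training tokens in FLOPs and that generating one token costs roughly 2 × parameters. The 10^25 FLOP figure is the training-compute level at which the EU AI Act presumes systemic risk for general-purpose models. The model size, token counts, and function names are hypothetical and chosen only for illustration.

```python
# Rough illustration only: rule-of-thumb FLOP estimates, hypothetical model numbers.

EU_AI_ACT_THRESHOLD_FLOPS = 1e25  # training-compute level for presumed systemic risk


def training_flops(params: float, training_tokens: float) -> float:
    """Approximate pretraining compute with the ~6 * N * D rule of thumb."""
    return 6.0 * params * training_tokens


def inference_flops_per_response(params: float, tokens_generated: float) -> float:
    """Approximate generation compute with ~2 * N FLOPs per generated token."""
    return 2.0 * params * tokens_generated


def crosses_training_threshold(params: float, training_tokens: float) -> bool:
    """True if the estimated pretraining compute exceeds the threshold."""
    return training_flops(params, training_tokens) > EU_AI_ACT_THRESHOLD_FLOPS


# Hypothetical model: 70 billion parameters, 15 trillion training tokens.
PARAMS = 70e9
TRAINING_TOKENS = 15e12

# The same weights answering briefly versus reasoning at length (hypothetical budgets).
short_answer = inference_flops_per_response(PARAMS, tokens_generated=500)
long_reasoning = inference_flops_per_response(PARAMS, tokens_generated=50_000)

if __name__ == "__main__":
    print(f"Pretraining compute: {training_flops(PARAMS, TRAINING_TOKENS):.2e} FLOPs")
    print(f"Above training threshold: {crosses_training_threshold(PARAMS, TRAINING_TOKENS)}")
    print(f"Per-response compute ratio: {long_reasoning / short_answer:.0f}x")
```

Under these assumptions the threshold check gives the same answer whether the model spends 500 or 50,000 tokens per response, even though the per-response compute differs by a factor of 100. A rule keyed only to training compute has no input through which that difference could register.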

3.3 Fragmented Governance Ecosystems

A multi-scale analysis of national AI governance systems (e.g., Canada’s) reveals structural fragmentation: inconsistent norms, unclear actor roles, and uneven resource access. Such fragmentation is especially damaging for frontier AI, where coordination is essential.

4. The Real Risk: A False Sense of Control

The most dangerous outcome is not underregulation—it's illusory regulation.

When governments believe they have effective oversight, but the underlying technical assumptions are wrong, society becomes more vulnerable. We risk:

- Overestimating our ability to prevent catastrophic misuse

- Underestimating the speed of capability jumps

- Misallocating regulatory resources

- Allowing frontier labs to outpace oversight by orders of magnitude

5. What Effective Governance Must Look Like Now

Based on emerging research and frontier‑lab proposals, a viable governance model must include the following:

5.1 Continuous, Not Static, Oversight

Regulation must shift from one-time compliance to ongoing monitoring, including:

- Post‑deployment capability tracking

- Real‑time risk assessments

- Mandatory reporting of emergent behaviours
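As a minimal sketch of what post-deployment capability tracking could look like in practice, the Python below compares evaluation scores across model versions and flags any benchmark whose score jumps by more than a chosen margin. The `EvalSnapshot` type, the benchmark names, the scores, and the 0.10 margin are all hypothetical; a real monitoring regime would need agreed benchmarks and reporting rules.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class EvalSnapshot:
    """One evaluation result for a deployed model (all fields hypothetical)."""
    model_version: str
    benchmark: str
    score: float  # assumed to lie between 0.0 and 1.0


def flag_capability_jumps(snapshots: list[EvalSnapshot], max_jump: float = 0.10) -> list[str]:
    """Return one report line per benchmark whose score rose by more than
    max_jump between consecutive snapshots, in the order given."""
    last_seen: dict[str, EvalSnapshot] = {}
    reports: list[str] = []
    for snap in snapshots:
        previous = last_seen.get(snap.benchmark)
        if previous is not None and snap.score - previous.score > max_jump:
            reports.append(
                f"{snap.benchmark}: {previous.model_version} -> {snap.model_version} "
                f"rose by {snap.score - previous.score:.2f}"
            )
        last_seen[snap.benchmark] = snap
    return reports


# Hypothetical evaluation history for two versions of one deployed model.
history = [
    EvalSnapshot("v1.0", "tool_use", 0.41),
    EvalSnapshot("v1.0", "code_generation", 0.62),
    EvalSnapshot("v1.1", "tool_use", 0.44),
    EvalSnapshot("v1.1", "code_generation", 0.80),
]

if __name__ == "__main__":
    for line in flag_capability_jumps(history):
        print(line)
```

The design choice here is to compare each snapshot with the previous one for the same benchmark rather than with a fixed baseline, so the check keeps working as the model is updated after release.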

5.2 Transparency as a Primary Control Mechanism

As recent governance research suggests, transparency, not scale, becomes the primary lever for oversight. This includes:

- Disclosure of training data sources

- Model evaluation results

- Safety‑critical incidents

- Third‑party audits

5.3 Governance Across the Entire Lifecycle

From dataset construction to inference-time behaviour, oversight must cover:

- Pretraining

- Fine‑tuning

- Reinforcement learning

- Tool‑use integration

- Deployment environments

- Post‑deployment evolution
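One way to make lifecycle coverage checkable is to treat the stages above as an explicit list and ask which of them a given regime actually reaches. The Python sketch below does that; the `LifecycleStage` enum mirrors the list in this section, while the `coverage_gaps` helper and the example regime are hypothetical illustrations, not a description of any existing law.

```python
from __future__ import annotations

from enum import Enum


class LifecycleStage(Enum):
    """Lifecycle stages, in the order listed in this section."""
    PRETRAINING = "pretraining"
    FINE_TUNING = "fine-tuning"
    REINFORCEMENT_LEARNING = "reinforcement learning"
    TOOL_USE_INTEGRATION = "tool-use integration"
    DEPLOYMENT_ENVIRONMENTS = "deployment environments"
    POST_DEPLOYMENT_EVOLUTION = "post-deployment evolution"


def coverage_gaps(covered: set[LifecycleStage]) -> list[LifecycleStage]:
    """Return the stages a regime does not cover, in lifecycle order."""
    return [stage for stage in LifecycleStage if stage not in covered]


# Hypothetical regime that only looks at training-time compute.
scale_based_regime = {LifecycleStage.PRETRAINING}
gaps = coverage_gaps(scale_based_regime)

if __name__ == "__main__":
    print([stage.value for stage in gaps])
```

A regime keyed only to pretraining leaves the other five stages uncovered in this toy model, which is the gap lifecycle-wide governance is meant to close.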

5.4 International Coordination

Frontier AI is global; governance must be too. Fragmented national frameworks cannot meaningfully constrain transnational frontier labs.

6. The Hard Truth: AI Governance Will Always Lag — But It Doesn’t Have to Fail

Regulators will never move as fast as frontier AI. But they can build systems that adapt, update, and respond dynamically.

The future of AI governance is not about controlling every capability. It’s about building adaptive, transparent, continuously updated oversight systems that evolve alongside the models they regulate.

If we fail to make this shift, we won’t just be behind — we’ll be governing a world that no longer exists.

Written/published by Kevin Marshall with the help of AI models (AI Quantum Intelligence).
