Finger-shaped sensor enables more dexterous robots

MIT engineers develop a long, curved touch sensor that could enable a robot to grasp and manipulate objects in multiple ways.

MIT researchers have developed a camera-based touch sensor that is long, curved, and shaped like a human finger. Their device, which provides high-resolution tactile sensing over a large area, could enable a robotic hand to perform multiple types of grasps. Image: Courtesy of the researchers

By Adam Zewe | MIT News

Imagine grasping a heavy object, like a pipe wrench, with one hand. You would likely grab the wrench using your entire fingers, not just your fingertips. Sensory receptors in your skin, which run along the entire length of each finger, would send information to your brain about the tool you are grasping.

In a robotic hand, tactile sensors that use cameras to obtain information about grasped objects are small and flat, so they are often located in the fingertips. These robots, in turn, use only their fingertips to grasp objects, typically with a pinching motion. This limits the manipulation tasks they can perform.

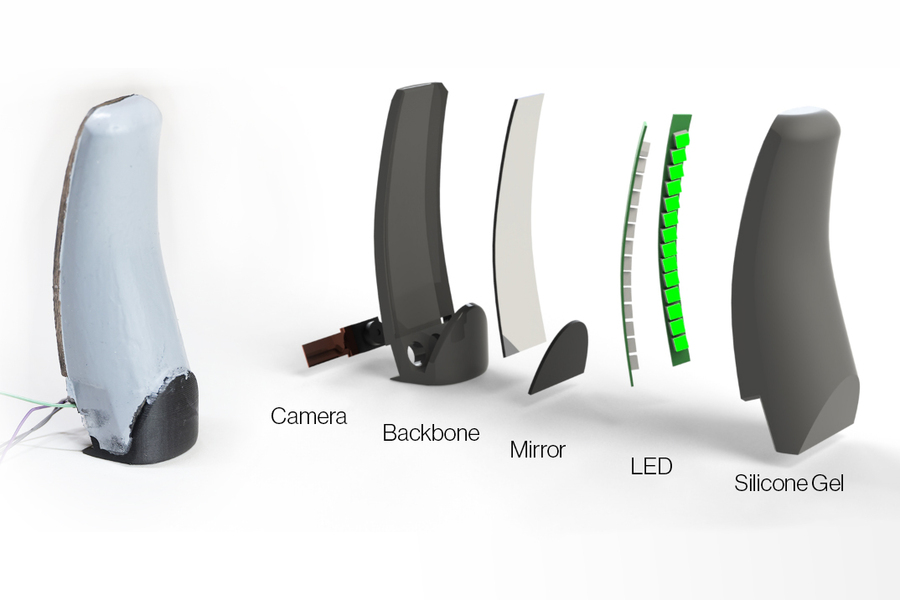

MIT researchers have developed a camera-based touch sensor that is long, curved, and shaped like a human finger. Their device provides high-resolution tactile sensing over a large area. The sensor, called the GelSight Svelte, uses two mirrors to reflect and refract light so that one camera, located in the base of the sensor, can see along the entire finger’s length.

In addition, the researchers built the finger-shaped sensor with a flexible backbone. By measuring how the backbone bends when the finger touches an object, they can estimate the force being placed on the sensor.

They used GelSight Svelte sensors to produce a robotic hand that was able to grasp a heavy object like a human would, using the entire sensing area of all three of its fingers. The hand could also perform the same pinch grasps common to traditional robotic grippers.

This gif shows a robotic hand that incorporates three finger-shaped GelSight Svelte sensors. The sensors, which provide high-resolution tactile sensing over a large area, enable the hand to perform multiple grasps, including pinch grasps that use only the fingertips and a power grasp that uses the entire sensing area of all three fingers. Credit: Courtesy of the researchers

“Because our new sensor is human finger-shaped, we can use it to do different types of grasps for different tasks, instead of using pinch grasps for everything. There’s only so much you can do with a parallel jaw gripper. Our sensor really opens up some new possibilities on different manipulation tasks we could do with robots,” says Alan (Jialiang) Zhao, a mechanical engineering graduate student and lead author of a paper on GelSight Svelte.

Zhao wrote the paper with senior author Edward Adelson, the John and Dorothy Wilson Professor of Vision Science in the Department of Brain and Cognitive Sciences and a member of the Computer Science and Artificial Intelligence Laboratory (CSAIL). The research will be presented at the IEEE Conference on Intelligent Robots and Systems.

Mirror mirror

Cameras used in tactile sensors are limited by their size, the focal distance of their lenses, and their viewing angles. Therefore, these tactile sensors tend to be small and flat, which confines them to a robot’s fingertips.

With a longer sensing area, one that more closely resembles a human finger, the camera would need to sit farther from the sensing surface to see the entire area. This is particularly challenging due to size and shape restrictions of a robotic gripper.
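To make the geometry concrete, here is a rough sketch of why a longer sensing area pushes the camera back. It assumes an idealized camera looking straight at a flat surface, with no mirrors; the function name, the 90-degree field of view, and the 20 mm and 80 mm lengths are illustrative values, not figures from the researchers.

```python
import math

def min_camera_distance(sensing_length_mm: float, fov_deg: float) -> float:
    """Smallest head-on distance at which a camera with the given field of
    view covers a flat sensing surface of the given length.

    Idealized pinhole geometry: length = 2 * distance * tan(fov / 2).
    """
    half_angle = math.radians(fov_deg) / 2.0
    return sensing_length_mm / (2.0 * math.tan(half_angle))

# Hypothetical numbers: a fingertip-sized pad versus a finger-length surface.
fingertip_pad = min_camera_distance(20.0, 90.0)
full_finger = min_camera_distance(80.0, 90.0)
print(f"fingertip pad: {fingertip_pad:.1f} mm, full finger: {full_finger:.1f} mm")
```

Under these assumptions the required distance grows in proportion to the sensing length, from roughly 10 mm to roughly 40 mm, which is hard to fit inside a slim finger.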

Zhao and Adelson solved this problem using two mirrors that reflect and refract light toward a single camera located at the base of the finger.

GelSight Svelte incorporates one flat, angled mirror that sits across from the camera and one long, curved mirror that sits along the back of the sensor. These mirrors redistribute light rays from the camera in such a way that the camera can see along the finger’s entire length.

To optimize the shape, angle, and curvature of the mirrors, the researchers designed software to simulate reflection and refraction of light.
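The researchers' software is not described in detail here, but the two optical rules such a simulation would rest on are standard: the law of reflection and Snell's law of refraction. The following is a minimal 2D sketch of those rules only; the function names and the refractive indices in the usage lines are assumptions chosen for illustration.

```python
import math

def reflect(d, n):
    """Reflect unit direction d off a surface with unit normal n: d - 2(d.n)n."""
    dot = d[0] * n[0] + d[1] * n[1]
    return (d[0] - 2.0 * dot * n[0], d[1] - 2.0 * dot * n[1])

def refract(d, n, n1, n2):
    """Refract unit direction d through a surface with unit normal n (pointing
    toward the incoming ray), going from index n1 into index n2.
    Returns None when total internal reflection occurs."""
    eta = n1 / n2
    cos_i = -(d[0] * n[0] + d[1] * n[1])
    sin2_t = eta * eta * (1.0 - cos_i * cos_i)
    if sin2_t > 1.0:
        return None  # no transmitted ray
    k = eta * cos_i - math.sqrt(1.0 - sin2_t)
    return (eta * d[0] + k * n[0], eta * d[1] + k * n[1])

# Illustrative use: a ray travelling down and to the right hits a horizontal surface.
s = math.sqrt(0.5)
ray = (s, -s)
normal = (0.0, 1.0)
print(reflect(ray, normal))            # bounces up and to the right
print(refract(ray, normal, 1.0, 1.5))  # bends toward the normal (assumed indices)
```

A full simulation would trace many such rays from the camera through each mirror surface, which is how mirror placement and curvature could be compared before building hardware.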

“With this software, we can easily play around with where the mirrors are located and how they are curved to get a sense of how well the image will look after we actually make the sensor,” Zhao explains.

The mirrors, camera, and two sets of LEDs for illumination are attached to a plastic backbone and encased in a flexible skin made from silicone gel. The camera views the back of the skin from the inside; based on the deformation, it can see where contact occurs and measure the geometry of the object’s contact surface.

A breakdown of the components that make up the finger-like touch sensor. Image: Courtesy of the researchers

In addition, the red and green LED arrays give a sense of how deeply the gel is being pressed down when an object is grasped, due to the saturation of color at different locations on the sensor.

The researchers can use this color saturation information to reconstruct a 3D depth image of the object being grasped.
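The article does not spell out how color is turned into depth, so the following is only a toy illustration of the general idea: measure the color saturation at each pixel and look up a depth from a calibration curve. The calibration pairs, function names, and the use of HSV saturation are all assumptions made for this sketch, not the researchers' pipeline.

```python
import colorsys
from bisect import bisect_right

# Hypothetical calibration: (saturation, indentation depth in mm) pairs, as
# might be collected by pressing an object of known shape into the gel.
CALIBRATION = [(0.0, 0.0), (0.25, 0.4), (0.5, 1.0), (1.0, 2.0)]

def saturation(rgb):
    """HSV saturation (0..1) of an 8-bit (r, g, b) pixel."""
    r, g, b = (c / 255.0 for c in rgb)
    return colorsys.rgb_to_hsv(r, g, b)[1]

def depth_from_saturation(s, table=CALIBRATION):
    """Piecewise-linear lookup of depth for a saturation value."""
    xs = [p[0] for p in table]
    if s <= xs[0]:
        return table[0][1]
    if s >= xs[-1]:
        return table[-1][1]
    i = bisect_right(xs, s)
    (x0, y0), (x1, y1) = table[i - 1], table[i]
    return y0 + (y1 - y0) * (s - x0) / (x1 - x0)

def depth_map(image):
    """Convert a 2D grid of RGB pixels into a 2D grid of depth estimates."""
    return [[depth_from_saturation(saturation(px)) for px in row] for row in image]

# Tiny made-up image: untouched gray gel next to a strongly colored contact patch.
image = [[(128, 128, 128), (200, 100, 100)],
         [(128, 128, 128), (255, 0, 0)]]
print(depth_map(image))
```

In this sketch, gray pixels map to zero depth and more saturated pixels map to deeper indentation; a real sensor would need a calibration measured on the actual hardware.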

The sensor’s plastic backbone enables it to determine proprioceptive information, such as the twisting torques applied to the finger. The backbone bends and flexes when an object is grasped. The researchers use machine learning to estimate how much force is being applied to the sensor, based on these backbone deformations.

However, combining these elements into a working sensor was no easy task, Zhao says.

“Making sure you have the correct curvature for the mirror to match what we have in simulation is pretty challenging. Plus, I realized there are some kinds of superglue that inhibit the curing of silicone. It took a lot of experiments to make a sensor that actually works,” he adds.

Versatile grasping

Once they had perfected the design, the researchers tested the GelSight Svelte by pressing objects, such as a screw, against different locations on the sensor to check image clarity and see how well it could determine the shape of the object.

They also used three sensors to build a GelSight Svelte hand that can perform multiple grasps, including a pinch grasp, a lateral pinch grasp, and a power grasp that uses the entire sensing area of the three fingers. Most robotic hands, which are shaped like parallel jaw grippers, can only perform pinch grasps.

A three-finger power grasp enables a robotic hand to hold a heavier object more stably. However, pinch grasps are still useful when an object is very small. Being able to perform both types of grasps with one hand would give a robot more versatility, Zhao says.

Moving forward, the researchers plan to enhance the GelSight Svelte so the sensor is articulated and can bend at the joints, more like a human finger.

“Optical-tactile finger sensors allow robots to use inexpensive cameras to collect high-resolution images of surface contact, and by observing the deformation of a flexible surface the robot estimates the contact shape and forces applied. This work represents an advancement on the GelSight finger design, with improvements in full-finger coverage and the ability to approximate bending deflection torques using image differences and machine learning,” says Monroe Kennedy III, assistant professor of mechanical engineering at Stanford University, who was not involved with this research. “Improving a robot’s sense of touch to approach human ability is a necessity and perhaps the catalyst problem for developing robots capable of working on complex, dexterous tasks.”

This research is supported, in part, by the Toyota Research Institute.