The Emperor’s New Code: Why AI Gurus Want You Confused (And How to Take Back Control)

Stop letting AI gurus overcomplicate things. Learn the difference between Black Box and Glass Box AI, and between Training and Inference, and start making smarter deployment decisions.

If you have sat through a strategy meeting recently, you have likely experienced a specific kind of intellectual fog.

It usually sounds like this: “We need to leverage a multi-modal, closed-loop, deep neural network architecture with a stochastic parrot overlay to drive synergistic value creation.”

The speaker—usually a self-proclaimed “AI Guru” or a consultant trying to bill by the hour—walks out to applause. Meanwhile, the decision-makers in the room are left with a headache and a lingering sense of inadequacy.

Here is the secret they don’t want you to know: If you cannot explain how the AI helps you make a decision in plain English, you do not understand it. And if you do not understand it, you should not deploy it.

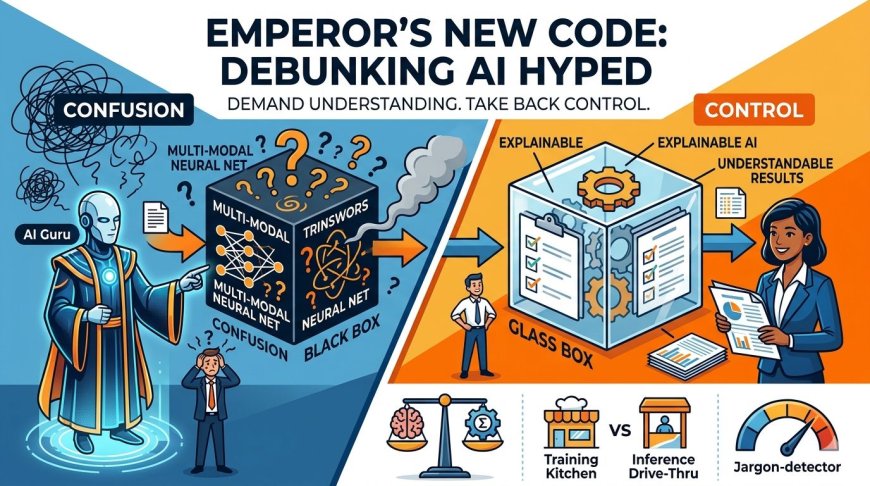

Let’s strip away the buzzwords. We are going to look at two concepts that are constantly overcomplicated: The Black Box Problem and Training vs. Inference. By the end of this, you won’t be an AI engineer—but you will be qualified to stop wasting money on solutions that sound fancy but don’t work.

1. The "Black Box" Myth: It’s Either Magic or Math

The term "Black Box" is used by experts to sound mysterious and by vendors to hide flaws. In reality, understanding your AI’s "box" is the difference between a tool and a liability.

The Simplification

Imagine you hire a new employee.

- The Black Box AI is a genius you hire who sits in a room, never speaks to anyone, and spits out answers. When you ask, “Why did you approve that $100,000 loan?” they shout back, “Because the algorithm said so.” Would you keep that employee? No. You’d fire them immediately. Black Box AI is an employee who cannot explain their own decisions.

- The Glass Box (or Explainable AI) is a diligent analyst. They look at the data, apply the company policy, and present a report: *“I approved the loan because the client’s revenue increased 20% for three years straight, and their debt-to-income ratio is below 15%. Here are the sources.”*
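To make the analyst's report concrete, here is a minimal sketch of a Glass Box decision in Python. Everything in it is hypothetical: the `LoanApplication` fields, the `review_loan` function, and the two thresholds (borrowed from the numbers in the analyst's example) are assumptions for illustration, not a real lending policy. The point is only that the decision comes back with its reasons attached.

```python
from dataclasses import dataclass


@dataclass
class LoanApplication:
    # Year-over-year revenue growth as fractions (0.20 means 20%).
    revenue_growth_by_year: list[float]
    # Debt-to-income ratio as a fraction (0.12 means 12%).
    debt_to_income: float


# Hypothetical policy thresholds, assumed for this sketch only.
MIN_GROWTH = 0.20
MAX_DEBT_TO_INCOME = 0.15


def review_loan(app: LoanApplication) -> tuple[bool, list[str]]:
    """Return the decision together with one plain-English reason per rule."""
    growth_ok = all(g >= MIN_GROWTH for g in app.revenue_growth_by_year)
    debt_ok = app.debt_to_income < MAX_DEBT_TO_INCOME

    reasons = [
        f"{'PASS' if growth_ok else 'FAIL'}: revenue growth of at least "
        f"{MIN_GROWTH:.0%} in every year (got {app.revenue_growth_by_year})",
        f"{'PASS' if debt_ok else 'FAIL'}: debt-to-income below "
        f"{MAX_DEBT_TO_INCOME:.0%} (got {app.debt_to_income:.0%})",
    ]
    # Approve only when every rule passes; the reasons list is the audit trail.
    return growth_ok and debt_ok, reasons


if __name__ == "__main__":
    approved, reasons = review_loan(LoanApplication([0.20, 0.22, 0.25], 0.12))
    print("Approved" if approved else "Declined")
    for line in reasons:
        print(" ", line)
```

A Black Box version of the same function would return only `True` or `False`. The list of reasons is what lets you audit a decision after the fact.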

How to Move Forward

When a guru tries to sell you a "proprietary, complex neural network" (Black Box), ask one question: “If this makes a mistake, how do I audit it?”

If they cannot answer that, walk away. For the large majority of business problems (inventory management, customer churn, fraud detection), you do not need a Black Box. You need a Glass Box. You need to know why so you can trust the what.

2. Training vs. Inference: The Kitchen Analogy

This is the most common area where strategy gurus inflate costs and timelines. They mix up the cost of building the kitchen with the cost of cooking the meal. If you confuse these two, you will either overspend on infrastructure or underestimate your operational costs.

The Simplification

Training (The Cooking School)

Training is where the AI learns. You take massive amounts of data (ingredients), throw them into a powerful computer (the kitchen), and let the machine find patterns (the recipe).

- The Guru’s Version: “We need to spin up a cluster of H100 GPUs with distributed computing architecture to fine-tune the latent space.”

- The Reality: “We are going to teach the AI how to do the job. This takes a lot of electricity and time, but we only do it when we launch or when the market changes significantly.”

Decision Maker Takeaway: Training is expensive, but it behaves like a capital expense (CapEx) or a one-time project cost that recurs only when you retrain. Do not let them sell you a "training solution" for daily operations unless you are OpenAI.

Inference (The Drive-Thru)

Inference is when the AI works. You feed it a new piece of data (a customer asking a question, a new invoice, a sensor reading) and it spits out an answer using what it learned during training.

- The Guru’s Version: “We are executing real-time token generation via the API endpoint with low-latency response validation.”

- The Reality: “We are asking the trained AI a question. This costs pennies and takes half a second.”
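The split between the two phases can be sketched in a few lines of Python. This is a toy illustration, not how a production model is built: the `train` and `infer` functions, the straight-line model, and the `history` numbers are all made up for the example. What it shows is the shape of the work: `train` runs once over all the data, and `infer` is a cheap call you repeat for every new input.

```python
def train(examples):
    """Learn a straight line y = slope * x + intercept from past data.

    This is the expensive step: it has to look at every example.
    """
    n = len(examples)
    mean_x = sum(x for x, _ in examples) / n
    mean_y = sum(y for _, y in examples) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in examples)
    variance = sum((x - mean_x) ** 2 for x, _ in examples)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


def infer(model, x):
    """Answer one new question using what was learned. Cheap and fast."""
    slope, intercept = model
    return slope * x + intercept


# Made-up history: (month number, units sold).
history = [(1, 12.0), (2, 14.0), (3, 16.0), (4, 18.0)]

model = train(history)                                # done once: the cooking school
forecasts = [infer(model, m) for m in (5, 6, 7)]      # done per request: the drive-thru

if __name__ == "__main__":
    print(forecasts)
```

Real models are far larger, but the pattern is the same: you pay for `train` rarely and for `infer` on every transaction.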

Decision Maker Takeaway: Inference is where you make money. This is the operational expense (OpEx). If a vendor tries to charge you “training-level” prices for every single transaction, they are either incompetent or hoping you don’t know the difference.
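If you want to check a vendor quote against this distinction, the arithmetic is short. The sketch below assumes a simple model with a one-time training cost and a flat price per request. The function names and every number in the usage example are hypothetical placeholders, so substitute the figures from your own quote.

```python
def total_cost(training_cost, cost_per_request, requests_per_month, months):
    """One-time build cost plus running cost over the period."""
    inference_cost = cost_per_request * requests_per_month * months
    return training_cost + inference_cost


def effective_cost_per_request(training_cost, cost_per_request,
                               requests_per_month, months):
    """Total cost spread across every request served in the period."""
    requests = requests_per_month * months
    return total_cost(training_cost, cost_per_request,
                      requests_per_month, months) / requests


if __name__ == "__main__":
    # Hypothetical quote: all four figures are placeholders.
    quote = dict(training_cost=50_000, cost_per_request=0.02,
                 requests_per_month=100_000, months=12)
    print(f"Total over the period: ${total_cost(**quote):,.2f}")
    print(f"Effective cost per request: ${effective_cost_per_request(**quote):.4f}")
```

The longer you run the model and the more requests it serves, the closer the effective cost per request falls toward the bare inference price. A quote where it never falls is worth a second look.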

The Strategy: How to Spot the Jargon Trap

Now that you understand these two concepts, you have a superpower: Jargon Detection.

When a consultant or an "AI expert" starts throwing out complicated terms to justify a massive budget or a six-month timeline, use this cheat sheet to pull them back to reality.

| The Jargon | The Translation | Your Question |
| --- | --- | --- |
| "Proprietary Black Box Model" | "We don’t know how it works either, good luck." | “Who is liable when it gets it wrong?” |
| "High-Dimensional Latent Space" | "The computer is thinking about patterns." | “Okay. But does it follow our business logic?” |
| "Expensive Training Clusters" | "We are building the kitchen." | “Is this a one-time build, or are we paying this rate forever?” |
| "Real-Time Inference" | "The AI is answering the question." | “What is the cost per transaction?” |

Conclusion: Simplicity is the Strategy

The AI industry is currently in a phase where complexity is mistaken for sophistication. But for decision makers, complexity is the enemy of execution.

- Demand a Glass Box: If the AI can’t explain its decisions, it’s a legal risk, not a business asset.

- Separate the Kitchen from the Meal: Don’t pay restaurant construction prices for a cheeseburger. Know the difference between the cost to build the AI (training) and the cost to use it (inference).

The companies that win in the next five years won’t be the ones with the most complex algorithms. They will be the ones who ask the simplest questions: “How does this help me make a decision?” and “How much does it actually cost to run?”

Ignore the gurus. Deploy the logic.

Written/published by Kevin Marshall with the help of AI models (AI Quantum Intelligence).
