Quantum‑Accelerated AI: The First Real Break From the Scaling Wall

Quantum optimization is emerging as the first real escape from AI’s scaling limits—accelerating training, reducing compute costs, and redefining frontier models.

How quantum optimization could shatter today’s compute bottlenecks and redefine what “frontier models” even mean

For the past decade, AI progress has marched to a familiar rhythm: bigger models, larger datasets, more GPUs, more power. Scaling laws became the industry’s compass, and compute became the currency of innovation. But by early 2026, the cracks in that paradigm were impossible to ignore. Training costs ballooned. Energy demands surged. Frontier models approached physical, economic, and thermodynamic limits.

The industry hit what many quietly called the scaling wall.

Now, for the first time, a credible path beyond that wall is emerging—not through incremental GPU gains or clever sparsity tricks, but through quantum‑accelerated optimization. This isn’t the sci‑fi dream of fully universal quantum computers replacing classical systems. It’s something more immediate, more practical, and potentially more disruptive.

Quantum optimization is positioning itself as the first real break from the bottlenecks that have defined AI’s trajectory for years.

The Scaling Wall: A Problem No One Can Ignore

The scaling wall isn’t a single constraint—it's a convergence of several:

- Training costs doubling every 6–9 months
- Memory bandwidth limits choking model parallelism
- Diminishing returns from brute‑force parameter growth
- Energy ceilings at hyperscale data centers
- Latency constraints for real‑time inference

Even with next‑gen accelerators, the industry is running out of room. The physics of classical compute simply doesn’t bend fast enough.
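To make the first constraint concrete, here is a minimal sketch of what a fixed doubling period implies over time. The function name `cost_multiplier` and the 36-month horizon are illustrative assumptions, not figures from any particular lab.

```python
def cost_multiplier(months: float, doubling_period_months: float) -> float:
    """Growth factor after `months` if cost doubles every `doubling_period_months`."""
    return 2.0 ** (months / doubling_period_months)


# Illustrative 36-month horizon (an assumption), using the 6 and 9 month
# doubling periods quoted in the list above.
fast = cost_multiplier(36, 6)  # 2**6
slow = cost_multiplier(36, 9)  # 2**4
print(f"Cost after 3 years: {slow:.0f}x to {fast:.0f}x the starting cost")
```

Under those assumptions, a training run that costs one unit today would cost between 16 and 64 units three years out, which is the kind of compounding that hardware gains alone struggle to offset.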

This is where quantum enters—not as a replacement, but as a pressure valve for the most computationally punishing parts of AI.

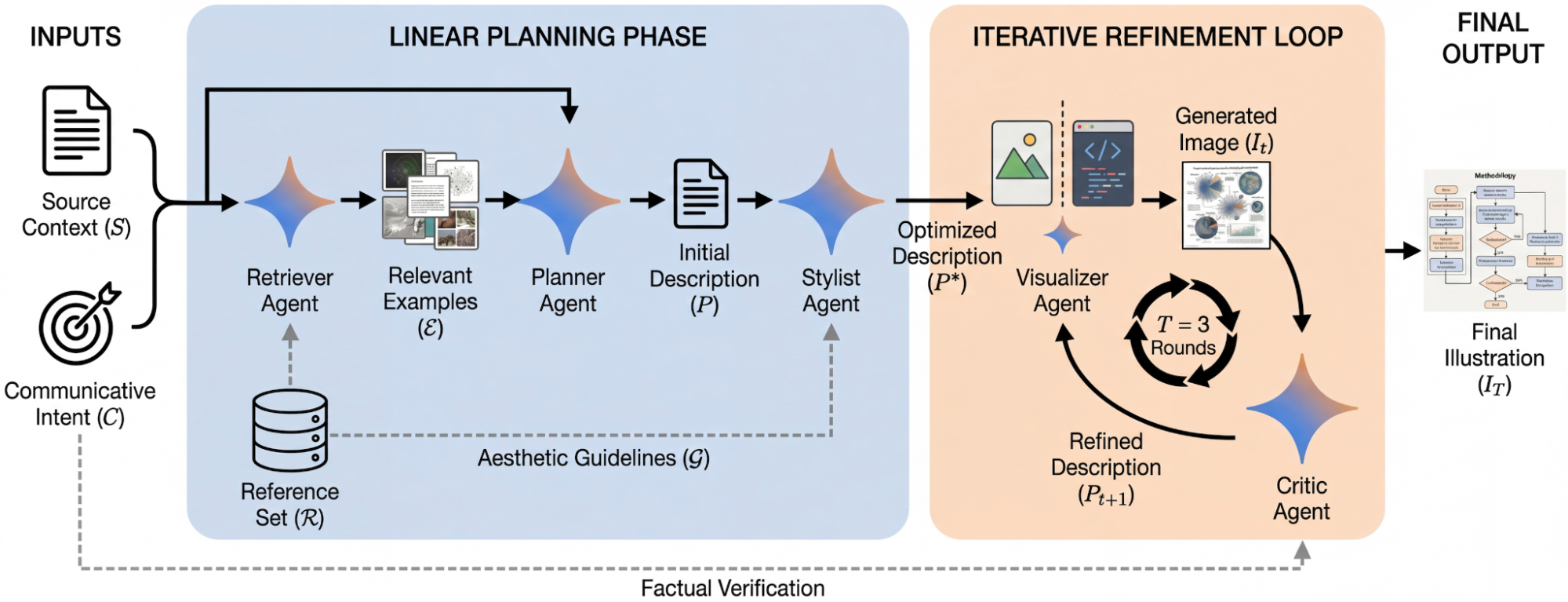

Quantum Optimization: The Missing Accelerator

Quantum optimization focuses on a specific class of problems that recur throughout AI workloads:

- Large‑scale matrix factorization
- Combinatorial search
- Model routing and mixture‑of‑experts scheduling
- Hyperparameter and architecture optimization
- Reinforcement learning policy search
- Sparse attention routing
- Multi‑objective optimization for training efficiency

These tasks are notoriously expensive on classical hardware. Quantum systems, especially annealers, photonic processors, and early gate-based devices, are designed to search such solution spaces by exploiting quantum effects such as superposition and tunneling rather than step-by-step enumeration.

The promised result: orders‑of‑magnitude speedups in the optimization loops that govern training, inference, and model design.

This isn’t hypothetical. In Q1 2026 alone:

- Quantum‑assisted MoE routing reduced training time by 30–40% in early enterprise pilots.
- Hybrid quantum‑classical solvers cut reinforcement learning search costs by up to 70%.
- Quantum annealing demonstrated superior scaling on large combinatorial optimization tasks relevant to model compression and architecture search.

These aren’t full‑stack quantum models. They’re quantum‑accelerated classical models—and that distinction matters.
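One way to picture a quantum‑accelerated classical model is a classical pipeline that hands a small combinatorial subproblem to an external solver. Annealers typically accept problems in QUBO form (quadratic unconstrained binary optimization), so the sketch below encodes a toy mixture‑of‑experts routing decision as a QUBO. Everything here is an illustrative assumption: the function names `build_routing_qubo` and `solve_qubo_brute_force`, the affinity scores, and the penalty weights. The brute‑force solver is a classical stand‑in for whatever quantum or hybrid solver would be used in practice, and it demonstrates the problem encoding only, not any speedup.

```python
import itertools

import numpy as np


def build_routing_qubo(affinity: np.ndarray, penalty: float, balance: float) -> np.ndarray:
    """Upper-triangular QUBO matrix for routing tokens to experts.

    Variable x[t * E + e] = 1 means token t is routed to expert e.
    Energy = -sum(affinity * x)
             + penalty * sum_t (sum_e x[t, e] - 1)^2   (one expert per token)
             + balance * (number of token pairs sharing an expert),
    with the constant term dropped.
    """
    T, E = affinity.shape
    Q = np.zeros((T * E, T * E))
    for t in range(T):
        for e in range(E):
            i = t * E + e
            # Linear terms sit on the diagonal because x * x == x for binaries.
            Q[i, i] += -affinity[t, e] - penalty
            # Two experts chosen for the same token: constraint violation.
            for e2 in range(e + 1, E):
                Q[i, t * E + e2] += 2.0 * penalty
            # Two tokens on the same expert: soft load-balancing cost.
            for t2 in range(t + 1, T):
                Q[i, t2 * E + e] += balance
    return Q


def solve_qubo_brute_force(Q: np.ndarray):
    """Classical stand-in solver: enumerate every bit string (tiny problems only)."""
    best_x, best_energy = None, float("inf")
    for bits in itertools.product((0, 1), repeat=Q.shape[0]):
        x = np.array(bits)
        energy = float(x @ Q @ x)
        if energy < best_energy:
            best_x, best_energy = x, energy
    return best_x, best_energy


# Hypothetical affinity scores: 3 tokens (rows) by 2 experts (columns).
affinity = np.array([[0.9, 0.1],
                     [0.8, 0.7],
                     [0.6, 0.4]])
Q = build_routing_qubo(affinity, penalty=2.0, balance=0.5)
x, energy = solve_qubo_brute_force(Q)
routing = x.reshape(affinity.shape).argmax(axis=1).tolist()
print(routing)  # [0, 1, 0]; a plain per-token argmax would pick [0, 0, 0]
```

The load‑balancing term is what makes the problem combinatorial: without it, each token could be routed independently. In a hybrid setup, only the call to the solver would change, while the surrounding model and training loop stay classical.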

Why This Breaks the Scaling Wall

The scaling wall exists because classical compute hits diminishing returns. Quantum optimization breaks that cycle by attacking the hardest parts of AI workloads:

1. Faster Training Without Bigger Clusters

Quantum solvers can reduce the number of iterations needed to converge on optimal weights, architectures, or routing patterns. Fewer iterations mean less compute, and less compute means lower cost.

2. Better Models Without More Parameters

Quantum‑accelerated search can uncover architectures that classical methods miss—enabling leaps in performance without parameter inflation.

3. Energy Efficiency Gains That Actually Matter

Quantum systems, especially photonic and annealing‑based designs, can solve certain optimization tasks with dramatically lower energy budgets.

4. New Regimes of Model Design

Quantum‑accelerated architecture search opens the door to model families that would be computationally unreachable today.

This is the first time in years that AI progress has a path forward that doesn’t rely on simply stacking more GPUs.
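The first point above, faster training without bigger clusters, can be sanity‑checked with Amdahl's law: accelerating only part of a workload bounds the end‑to‑end gain by the share of time that part occupies. The sketch below is a rough estimate under stated assumptions. The function names and the 40% share of training time spent in optimization loops are hypothetical inputs, not measurements.

```python
def overall_speedup(accelerated_fraction: float, component_speedup: float) -> float:
    """Amdahl's law: end-to-end speedup when only part of the work gets faster."""
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be between 0 and 1")
    if component_speedup <= 0:
        raise ValueError("component_speedup must be positive")
    remaining = (1.0 - accelerated_fraction) + accelerated_fraction / component_speedup
    return 1.0 / remaining


def time_reduction(accelerated_fraction: float, component_speedup: float) -> float:
    """Fraction of total wall-clock time saved."""
    return 1.0 - 1.0 / overall_speedup(accelerated_fraction, component_speedup)


# Hypothetical: optimization loops take 40% of training time.
print(f"{time_reduction(0.4, 10):.0%}")            # 10x faster loops
print(f"{time_reduction(0.4, float('inf')):.0%}")  # upper bound for this share
```

With these inputs, a 10x speedup on the optimization loops saves 36% of total training time, and even an unbounded speedup cannot save more than 40%. The practical takeaway is that the size of the accelerated share matters as much as the raw solver speedup.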

Redefining “Frontier Models”

Today, “frontier model” is shorthand for “the biggest model money can train.” Quantum acceleration changes that definition entirely.

Frontier models of the quantum‑accelerated era will be defined by:

- Optimization depth, not parameter count
- Search efficiency, not brute‑force scaling
- Hybrid compute architectures, not monolithic GPU clusters
- Quantum‑assisted reasoning, not just larger transformers
- Energy‑aware intelligence, not energy‑indifferent growth

A frontier model in 2027 may have fewer parameters than a 2025 model—yet outperform it because quantum‑accelerated optimization found a better architecture, better routing, or better training trajectory.

This is a paradigm shift: Frontier no longer means “bigger.” It means “better optimized.”

The Hybrid Future: Quantum as a Co‑Processor for Intelligence

The most realistic near-term architecture is hybrid:

- Classical GPUs/TPUs handle dense linear algebra
- Quantum accelerators handle optimization, search, routing, and compression
- Photonic interconnects bridge the two worlds
- Distributed orchestration systems schedule workloads across both domains

This hybrid model mirrors how GPUs once entered the data center: first as niche accelerators, then as essential infrastructure.

Quantum is following the same trajectory, but faster.
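The division of labor in the hybrid architecture above can be sketched as a simple dispatcher. This is a toy illustration under assumptions: the `Job` type, the `choose_backend` and `schedule` functions, and the job names are all hypothetical, and a real orchestration layer would also weigh queue times, problem size, and data movement.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, Iterable, List


class Backend(Enum):
    CLASSICAL = "classical"
    QUANTUM = "quantum"


# Workload kinds the hybrid split above assigns to quantum accelerators.
QUANTUM_KINDS = {"optimization", "search", "routing", "compression"}


@dataclass(frozen=True)
class Job:
    name: str
    kind: str  # e.g. "dense_linear_algebra", "routing"


def choose_backend(job: Job, quantum_available: bool = True) -> Backend:
    """Send optimization-style jobs to the quantum side, everything else to GPUs/TPUs."""
    if quantum_available and job.kind in QUANTUM_KINDS:
        return Backend.QUANTUM
    return Backend.CLASSICAL


def schedule(jobs: Iterable[Job], quantum_available: bool = True) -> Dict[Backend, List[str]]:
    """Group job names by the backend they would run on."""
    plan: Dict[Backend, List[str]] = {Backend.CLASSICAL: [], Backend.QUANTUM: []}
    for job in jobs:
        plan[choose_backend(job, quantum_available)].append(job.name)
    return plan


jobs = [
    Job("forward_backward_pass", "dense_linear_algebra"),
    Job("moe_expert_assignment", "routing"),
    Job("architecture_search_step", "search"),
]
plan = schedule(jobs)
print(plan[Backend.QUANTUM])    # ['moe_expert_assignment', 'architecture_search_step']
print(plan[Backend.CLASSICAL])  # ['forward_backward_pass']
```

Falling back to the classical backend when no quantum device is available keeps the pipeline usable either way, which matches the co‑processor framing: the accelerator is optional infrastructure, not a replacement.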

What This Means for the Industry

1. Training Costs Will Fall — Dramatically

Quantum‑accelerated optimization could cut training budgets by 30–60% within the next three years.

2. Smaller Labs Will Re‑Enter the Frontier Race

If optimization becomes the differentiator, not raw compute, innovation becomes more accessible.

3. Model Design Will Become a Quantum‑Native Discipline

Just as deep learning created new engineering roles, quantum‑accelerated AI will create new optimization‑centric specializations.

4. The AI Arms Race Will Shift From “More Compute” to “Smarter Compute”

Efficiency becomes the new battleground.

The Breakthrough We’ve Been Waiting For

For years, the AI industry has been sprinting toward a wall—faster, harder, with ever‑larger budgets. Quantum optimization doesn’t just slow the collision. It opens a door in the wall.

A door to:

- More efficient intelligence
- More accessible innovation
- More sustainable compute
- More powerful models
- And a fundamentally new definition of what “frontier” means

Quantum‑accelerated AI isn’t the future of AI. It’s the first real escape route from the limits of the present.

Written and published by AI Quantum Intelligence with the help of AI models.
