Guardrails, Not Roadblocks: The Adaptive AI Integrity Framework for Generative and Agentic Governance

Implementing the Adaptive AI Integrity Framework (AAIF): A pragmatic, scalable governance model to balance speed and safety when deploying generative and agentic AI.

This article gives AI Quantum Intelligence readers and subscribers a pragmatic model for governing and overseeing Artificial Intelligence (AI) initiatives, one suited to the rapid evolution of generative and agentic models. The model, the Adaptive AI Integrity Framework (AAIF), is designed to scale: it integrates with existing organizational structures while providing the guardrails needed for ethical, legal, and operational excellence.

Executive Summary

AI, particularly Generative and Agentic models, presents a unique challenge to traditional governance: it is fast-moving, highly technical, often non-deterministic, and capable of autonomous action. Traditional "red tape" governance slows innovation, while no governance risks catastrophic failure, legal liability, and brand damage.

The Adaptive AI Integrity Framework (AAIF) resolves this dichotomy. It provides a structured, lifecycle-based approach that shifts the focus from reactive approval to proactive, embedded controls. It operates on the principle that governance is not a roadblock but the steering mechanism required to accelerate safely.

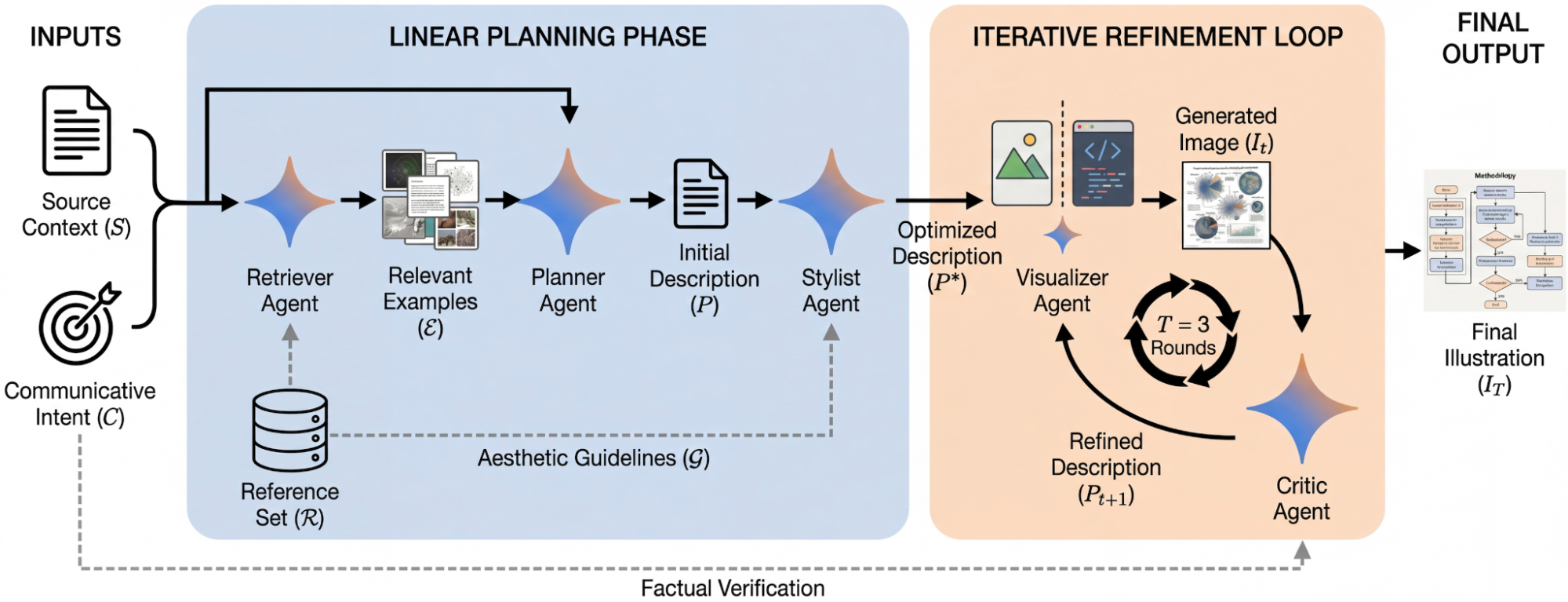

The Adaptive AI Integrity Framework (AAIF)

The AAIF is built on four intersecting dimensions, forming a continuous cycle of trust: Strategy & Ethics, Risk & Compliance, Lifecycle Execution, and Performance & Monitoring.

Visual 1: The Core AAIF Structure

This illustration provides a high-level overview of the four primary pillars of the model, emphasizing their cyclic and interconnected nature. The central "Trust & Agility" core represents the ultimate organizational goal: achieving speed and safety simultaneously.

- Pillar 1: Strategy & Ethics (Define the 'Why' and 'Should'). This pillar establishes the organizational AI principles (e.g., fairness, transparency, accountability). It aligns AI investments with core business goals and defines the ethical boundaries the organization will not cross, even if technically feasible.

- Pillar 2: Risk & Compliance (Define the 'Must' and 'Safeguard'). This function translates ethical principles into enforceable policies. It conducts risk assessments (Low, Medium, High) for every AI project, identifying regulatory requirements (like GDPR or the EU AI Act) and defining technical guardrails (e.g., data privacy controls, bias mitigation steps).

- Pillar 3: Lifecycle Execution (Embed Governance in 'How'). Governance is integrated into the entire product development lifecycle (DevOps/MLOps). This means checks are automated within the development pipeline (e.g., automatic bias testing before model deployment, code reviews for agentic decision logic). It is the realization of "governance by design."

- Pillar 4: Performance & Monitoring (Observe the 'Is'). Once deployed, AI must be continuously monitored. This pillar tracks model performance (accuracy drift, hallucination rates for GenAI) and audit logs for agentic actions (decisions made by agents, external API calls), alerting appropriate human owners to anomalies or policy violations.
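To make the Pillar 3 idea of an automated pre-deployment check concrete, here is a minimal sketch of a bias gate. It is an illustration under stated assumptions, not part of the AAIF itself: the fairness metric (the gap in positive-prediction rates between groups), the 0.10 limit, and the names `bias_gate`, `selection_rate`, and `GateResult` are all hypothetical choices that a real team would replace with its own policy.

```python
from dataclasses import dataclass


@dataclass
class GateResult:
    passed: bool
    parity_gap: float
    reason: str


def selection_rate(predictions: list[int]) -> float:
    """Share of positive (1) predictions in one group."""
    if not predictions:
        raise ValueError("each group needs at least one prediction")
    return sum(predictions) / len(predictions)


def bias_gate(preds_by_group: dict[str, list[int]], max_gap: float = 0.10) -> GateResult:
    """Fail the gate when selection rates across groups differ by more than max_gap.

    max_gap is an assumed policy value, not a recommended threshold.
    """
    rates = {group: selection_rate(preds) for group, preds in preds_by_group.items()}
    gap = max(rates.values()) - min(rates.values())
    if gap > max_gap:
        return GateResult(False, gap, f"parity gap {gap:.2f} exceeds limit {max_gap:.2f}")
    return GateResult(True, gap, "within limit")
```

A pipeline step could call `bias_gate` on a held-out evaluation set and stop the release when `passed` is false, which keeps the check inside the development workflow instead of in a separate approval meeting.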

Implementation & Organizational Nuances

The power of the AAIF lies in its scalability. It is not a rigid prescription, but a framework adapted to organizational context.

Organizational Size and Complexity

The primary variable in implementation is complexity, often correlated with size.

- For Small-to-Midsize Businesses (SMBs): Efficiency is paramount. The model is implemented by a small, cross-functional AI Steering Committee (e.g., CEO, CTO, Legal Counsel) meeting monthly. A single "AI Champion" often manages both the Risk and Lifecycle functions. Documentation is streamlined; governance uses light touchpoints focused on high-risk areas. Automation is essential because it lets a small team cover more ground.

- For Large Enterprises: Complexity demands specialization. A dedicated AI Governance Council establishes policies implemented by specialized units: an Ethics Board, an AI Risk Management function, and dedicated MLOps teams handling Pillar 3. This matrixed responsibility requires strong orchestration platforms (Pillar 4 dashboards are critical) to maintain visibility.

Industry-Specific Applications

Different industries emphasize different pillars based on risk tolerance and regulation.

- Highly Regulated (e.g., Finance, Healthcare, Aerospace): Pillars 2 (Risk) and 4 (Monitoring) are heavily resourced. Explainability (knowing why a model made a decision) and human-in-the-loop (HITL) requirements for agentic actions are high priorities. Auditing and compliance reporting must be immaculate.

- Technology & Creative (e.g., Software, Entertainment): Pillar 1 (Strategy & Ethics) takes precedence, focusing on intellectual property, copyright (especially for GenAI), and avoiding unintended bias. Speed (Pillar 3) is a competitive advantage; automation within the dev lifecycle is crucial.

- Manufacturing & Logistics: Pillars 3 (Lifecycle Execution) and 4 (Monitoring) are vital for operational technology. Governance focuses on the reliability and safety of agentic systems interacting with the physical world.

Visual 2: The Three-Tier AI Oversight Structure

Effective implementation requires translating these abstract pillars into defined human roles. The following diagram illustrates a scalable oversight structure (the "Three Tiers"), showing the shift from strategic intent to operational reality.

The Three-Tier Oversight Model (Visual 2 Description):

- Tier 1: Strategic Oversight (e.g., C-Suite, Board, Steering Committee). This top layer defines the "Should" and "Why." They set the ultimate risk tolerance, approve major AI investments, and define the ethical North Star for the enterprise. They define success (e.g., ROI, market share, ethical leadership).

- Tier 2: Operational Management (e.g., AI Governance Council, specialized Risk officers). This crucial middle layer translates the high-level strategy (Tier 1) into actionable policies, risk frameworks, and compliance checklists. They review Medium- and High-Risk projects, manage the portfolio of AI initiatives, and select the technological tools for automated monitoring (Pillar 4).

- Tier 3: Execution Teams (e.g., Data Scientists, ML Engineers, Product Managers). This bottom layer focuses on "How." They build and deploy the models, but they do so within the guardrails established by Tier 2. Their MLOps (Machine Learning Operations) pipeline includes the integrated governance gates (like automatic bias testing) that make the AAIF efficient.

Conclusion

The Adaptive AI Integrity Framework provides organizations with a path to responsible AI deployment without sacrificing velocity. By integrating governance into the product lifecycle and defining clear, scalable oversight structures, organizations can build the trust necessary to realize the full transformative potential of generative and agentic AI.

Implementation Roadmap: Activating the AAIF

Phase-Based Integration for Scalable AI Governance

This roadmap provides a 90-day accelerated path to operationalizing the Adaptive AI Integrity Framework (AAIF). It transitions governance from a theoretical concept to a functional business asset.

Phase 1: Foundation & Alignment (Days 1–30)

Goal: Establish the Tier 1 and Tier 2 oversight structures and define core principles.

- Establish the AI Steering Committee: Appoint executive sponsors (Tier 1) to define the organization’s "AI North Star" and risk appetite.

- Draft the Ethical Charter: Formalize a concise set of "AI Non-Negotiables" (e.g., data privacy standards, transparency requirements).

- Inventory & Categorize: Audit existing AI pilots and tools. Assign a risk level (Low, Medium, High) to each based on data sensitivity and autonomy.

- Success Metric: Approved AI Ethics Charter and an established Governance Council.
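The "Inventory & Categorize" step above can be sketched as a small scoring rule. This is one possible approach rather than a prescribed method: the three-point scales, the "take the higher factor" rule, and the example initiatives are assumptions made for illustration, and the names `Level`, `AIInitiative`, and `risk_tier` are hypothetical.

```python
from dataclasses import dataclass
from enum import IntEnum


class Level(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class AIInitiative:
    name: str
    data_sensitivity: Level  # assumed scale: public data = LOW, regulated personal data = HIGH
    autonomy: Level          # assumed scale: suggests only = LOW, acts without review = HIGH


def risk_tier(item: AIInitiative) -> Level:
    """Use the higher of the two factors, so a single HIGH factor makes the project HIGH."""
    return max(item.data_sensitivity, item.autonomy)


# Hypothetical inventory entries, for illustration only.
inventory = [
    AIInitiative("marketing copy drafts", Level.LOW, Level.LOW),
    AIInitiative("support chatbot", Level.MEDIUM, Level.LOW),
    AIInitiative("invoice-paying agent", Level.MEDIUM, Level.HIGH),
]
tiers = {item.name: risk_tier(item).name for item in inventory}
```

Taking the maximum is deliberately conservative. An organization with a higher risk appetite might instead weight or sum the two factors, but the tiering rule should be written down either way so that the Governance Council reviews projects consistently.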

Phase 2: Integration & Automation (Days 31–60)

Goal: Embed governance into the technical lifecycle (Tier 3) and select oversight tools.

- Define "Gate" Requirements: Establish specific requirements for moving a model from "Development" to "Deployment" (e.g., mandatory bias testing for HR agents).

- Deploy MLOps Guardrails: Integrate automated checks into your software pipeline. For agentic AI, implement "Circuit Breakers": predefined conditions under which an agent must pause for human approval.

- Select Monitoring Platforms: Identify tools for Pillar 4 (Performance & Monitoring) that can track model drift and "hallucination" rates in real time.

- Success Metric: The first AI project successfully passes through an automated "Governance Gate."
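The "Circuit Breaker" idea from this phase can be illustrated with a short sketch: an agent's proposed action is checked against predefined rules, and any match pauses the action until a human approves it. Everything here is hypothetical, including the `CircuitBreaker` and `AgentAction` names, the refund example, and the 100-unit limit. A production system would also need persistence, authentication of approvers, and audit logging.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentAction:
    kind: str            # e.g. "send_email" or "issue_refund" (illustrative values)
    amount: float = 0.0


@dataclass
class CircuitBreaker:
    # Each rule returns True when the action must pause for human approval.
    rules: list[Callable[[AgentAction], bool]] = field(default_factory=list)
    pending: list[AgentAction] = field(default_factory=list)

    def submit(self, action: AgentAction, execute: Callable[[AgentAction], None]) -> str:
        """Run the action immediately unless a rule trips; otherwise queue it."""
        if any(rule(action) for rule in self.rules):
            self.pending.append(action)
            return "paused_for_approval"
        execute(action)
        return "executed"

    def approve(self, index: int, execute: Callable[[AgentAction], None]) -> None:
        """A human reviewer releases one queued action."""
        execute(self.pending.pop(index))


# Example: refunds above an assumed limit of 100 need a human decision.
executed: list[AgentAction] = []
breaker = CircuitBreaker(rules=[lambda a: a.kind == "issue_refund" and a.amount > 100])
status_small = breaker.submit(AgentAction("issue_refund", 25.0), executed.append)
status_large = breaker.submit(AgentAction("issue_refund", 500.0), executed.append)
```

In this sketch the small refund runs immediately while the large one waits in `pending` until `approve` is called, which is the pause-for-approval behavior the roadmap describes.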

Phase 3: Operationalization & Scaling (Days 61–90+)

Goal: Full deployment of the AAIF across the enterprise with continuous feedback loops.

- Roll Out Training: Conduct role-specific training for developers (technical guardrails) and business users (ethical use and reporting).

- Activate Live Monitoring: Launch the Pillar 4 dashboard for all high-risk AI deployments to ensure real-time visibility for the Governance Council (Tier 2).

- Feedback Loop Implementation: Establish a monthly "Governance Retrospective" to adjust policies based on performance data and evolving regulations.

- Success Metric: 100% of new AI initiatives managed within the AAIF; zero high-priority policy violations.
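As a rough illustration of the live monitoring this phase activates, the sketch below tracks a rolling rate of flagged outputs and signals when it crosses a threshold. It assumes that some upstream process (human review or an automated checker) has already labeled each response as flagged or not; how hallucinations are detected is outside the scope of this example. The `RateMonitor` name, the window size, and the 20% threshold are hypothetical.

```python
from collections import deque


class RateMonitor:
    """Tracks the share of flagged outputs over the most recent `window` responses."""

    def __init__(self, window: int = 100, alert_threshold: float = 0.05):
        self.flags: deque[bool] = deque(maxlen=window)  # old entries drop off automatically
        self.alert_threshold = alert_threshold

    def rate(self) -> float:
        return sum(self.flags) / len(self.flags) if self.flags else 0.0

    def record(self, flagged: bool) -> bool:
        """Record one reviewed response; return True when the rolling rate exceeds the threshold."""
        self.flags.append(flagged)
        return self.rate() > self.alert_threshold


# Example stream: eight clean responses followed by three flagged ones.
monitor = RateMonitor(window=10, alert_threshold=0.2)
alerts = [monitor.record(flag) for flag in [False] * 8 + [True] * 3]
```

In practice the alert would be routed to the model's human owner or to the Pillar 4 dashboard, and the same pattern could track other rolling metrics such as accuracy on labeled samples.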

Roadmap Adaptation by Organization Type

| Industry / Size | Roadmap Nuance |
| --- | --- |
| SMBs / Startups | Focus heavily on Phase 1 (Strategy) and use lightweight, manual checks in Phase 2 to maintain speed. |
| Highly Regulated | Extend Phase 2 by 30 days for rigorous legal/compliance verification and third-party audits. |
| Enterprises | Prioritize Phase 3 scaling, utilizing automated platforms to manage thousands of model instances simultaneously. |

Guided by insights from AI Quantum Intelligence and published with the help of AI models for the benefit of our readers.
